Waveguide combiners merge light from a micro-display with the user's view of the real world. In AR glasses, a 1.0–1.5mm substrate guides the projected image by total internal reflection while passing 80–85% of ambient light, which keeps the optics thin, light, and transparent.


Merging Real and Virtual Light

Waveguide combiners are the core optical engines in most modern augmented reality (AR) glasses, like Microsoft’s HoloLens or Magic Leap’s headsets. Their primary function is to seamlessly blend light from the real world with light generated by a micro-display (like an LCoS or MicroLED panel) to form a unified image for the user. Think of them as incredibly thin, transparent light guides that bend and direct digital light from a projector on your temple into your eye, all while allowing 80–85% of ambient light to pass through for a clear view of your surroundings.

| Key Parameter | Typical Value / Specification | Function |
|---|---|---|
| Transmissivity | 80% – 85% | The percentage of real-world light that passes through the combiner. A higher value means a clearer view of the real environment. |
| Eyebox | 15mm x 12mm (approx.) | The 3D volume in space where the full digital image is visible to the eye. A larger eyebox allows for more head movement. |
| Field of View (FoV) | 30° – 50° (diagonal) | The angular size of the projected digital image. A wider FoV allows for more immersive digital content. |
| Waveguide Thickness | 1.0mm – 1.5mm | The physical thickness of the glass or plastic substrate, critical for designing lightweight, consumer-grade glasses. |
| Efficiency | 100-500 nits/lumen | The luminous efficiency of the optical system. Higher efficiency means a brighter image from a lower-power, smaller projector. |

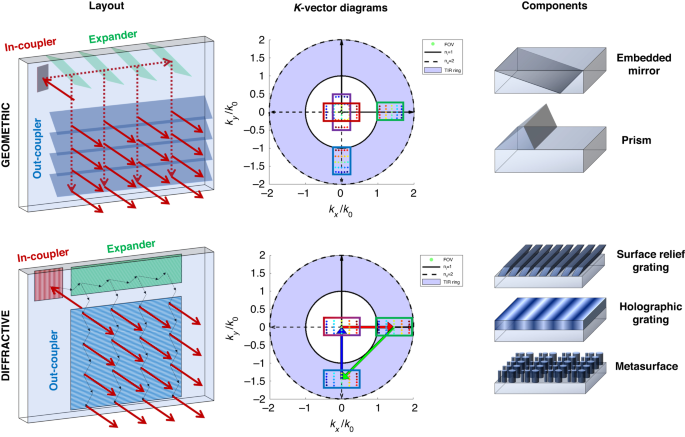

A tiny micro-projector, often no larger than 5mm on a side, generates the initial digital image. This light is first directed into the waveguide’s edge at a very precise angle. This is the in-coupling phase, typically handled by a surface relief grating (SRG) or a holographic optical element (HOE) with a line density of around 500-600 lines per millimeter.

Once trapped inside, the light travels through the transparent substrate via total internal reflection (TIR), bouncing off the inner surfaces thousands of times with minimal loss. This process efficiently propagates the image across the combiner’s surface, which can be over 50mm wide, from the temple towards the center of the eye. To finally get this light out of the waveguide and into the user’s eye, a second set of out-coupling gratings is used. These are engineered to break the TIR condition, selectively ejecting the light in a controlled beam towards the retina. The precision of these gratings is astounding, with feature sizes often measured in nanometers, and they must be replicated across the entire eyepiece with near-perfect uniformity to avoid visual artifacts like rainbow effects or smearing.

The ultimate goal is to deliver a digital image with a resolution of at least 60 pixels per degree and a brightness exceeding 2000 nits to remain visible in typical indoor office lighting (around 500 lux). This complex dance of in-and-out coupling, all happening within a 1.2mm thick piece of glass, is what makes the simultaneous, aligned view of both realities possible.

Guiding Light with Total Internal Reflection

TIR ensures over 98% of projected light stays confined to the waveguide, even when bouncing off internal surfaces 1,000–5,000 times (yes, you read that right) over a distance of 50–100mm. This precision is why modern AR glasses can be as thin as 1.2mm while still projecting a sharp, bright image.

| Key Parameter | Typical Value / Specification | Impact on Performance |
|---|---|---|
| Material Refractive Index (n) | 1.5–1.7 (e.g., glass: n=1.5; plastic: n=1.6) | Determines the critical angle for TIR: higher n lowers the minimum incident angle, enabling thinner waveguides. |
| Critical Angle (θc) | 36.0°–41.8° for n = 1.7–1.5 (calculated via θc = arcsin(n₂/n₁), where n₂=1 for air) | Light must hit the waveguide surface at angles >θc to reflect internally; deviations >0.5° cause leakage. |
| TIR Bounce Count | 1,000–5,000 cycles | More bounces mean longer propagation distance but increase sensitivity to surface defects. |
| Propagation Loss | <0.1dB/cm (roughly 2% per cm) | Primarily from surface roughness and material absorption; lower loss preserves image brightness. |
| Surface Roughness (Ra) | <5nm (polished) vs. 20–50nm (unpolished) | Every 1nm increase in roughness raises scattering loss by ~0.05dB/cm, which is critical for avoiding “ghost images.” |

Waveguides are made of transparent materials like soda-lime glass (n=1.5) or PMMA plastic (n=1.49). When light from a micro-projector (often an LCoS panel with ~5μm pixel pitch) strikes the waveguide’s inner surface at an angle greater than θc, measured from the surface normal, it can’t exit: it’s “trapped.” For glass, θc ≈ 41.8°, meaning light must strike the surface at, say, 43°–45° to reflect. This angle is controlled by input couplers (e.g., surface-relief gratings with 500–600 lines/mm), which redirect incoming light into the TIR regime.
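The critical-angle relation θc = arcsin(n₂/n₁) is easy to check numerically. The snippet below is a minimal sketch, assuming the waveguide sits in air (n₂ = 1); the function name and the n = 1.7 "high-index glass" entry are illustrative choices, not values tied to any specific product.

```python
import math

def critical_angle_deg(n_core: float, n_outside: float = 1.0) -> float:
    """Critical angle for total internal reflection, in degrees from the surface normal."""
    if n_core <= n_outside:
        raise ValueError("TIR requires the core index to exceed the outside index")
    return math.degrees(math.asin(n_outside / n_core))

# Indices taken from the text; the n=1.7 entry is a hypothetical upper-range material.
materials = [("PMMA", 1.49), ("soda-lime glass", 1.5), ("high-index glass", 1.7)]
for name, n in materials:
    print(f"{name} (n={n}): critical angle = {critical_angle_deg(n):.1f} deg")
```

For n = 1.5 this gives about 41.8°, and a higher index lowers the critical angle, so a wider range of internal angles stays trapped.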

Over 1,000 bounces, the small scattering loss at each reflection adds up to ~10% total loss. That is manageable, but manufacturers use chemical-mechanical polishing (CMP) to get surface roughness below 5nm, cutting that loss to ~5%. Material absorption also plays a role: high-purity silica glass absorbs <0.001dB/cm in the visible spectrum, while cheaper plastics might absorb 0.01dB/cm, which dims the image by about 2% over 10cm.
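Loss figures quoted in dB/cm can be turned into a fraction of light lost with the standard relation 1 − 10^(−dB/10). The sketch below applies it to the absorption values mentioned above; the helper names are illustrative, and the 10cm path length is just an example.

```python
def fraction_lost(loss_db: float) -> float:
    """Fraction of optical power lost for a given attenuation in dB."""
    return 1.0 - 10.0 ** (-loss_db / 10.0)

def path_loss_fraction(loss_db_per_cm: float, path_cm: float) -> float:
    """Fraction of power lost along a path with uniform attenuation."""
    return fraction_lost(loss_db_per_cm * path_cm)

# Absorption figures from the text, evaluated over an example 10 cm path.
for label, db_per_cm in [("high-purity silica", 0.001), ("cheaper plastic", 0.01)]:
    lost = path_loss_fraction(db_per_cm, 10.0)
    print(f"{label}: {db_per_cm} dB/cm over 10 cm -> {lost * 100:.2f}% lost")
```

As a rule of thumb, 0.1 dB corresponds to roughly 2.3% loss and 1 dB to roughly 21%.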

After bouncing, light reaches output couplers (another set of gratings or prisms) designed to break TIR. These couplers are angled to let light exit at precisely the angle needed to reach the user’s eyebox (typically 15mm x 12mm). If the output angle is off by just 1°, the image shifts laterally by ~0.27mm—enough to make virtual objects appear misaligned with real-world objects.
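The link between an angular error and a lateral shift is simple trigonometry: shift = distance × tan(error). The sketch below assumes an eye relief of about 15.5mm between the out-coupler and the pupil, a distance the text does not state but which reproduces the ~0.27mm figure.

```python
import math

def lateral_shift_mm(angle_error_deg: float, distance_mm: float) -> float:
    """Lateral displacement produced by an angular error at a given distance."""
    return distance_mm * math.tan(math.radians(angle_error_deg))

# Assumed eye relief (hypothetical): 15.5 mm from out-coupler to pupil.
EYE_RELIEF_MM = 15.5
shift = lateral_shift_mm(1.0, EYE_RELIEF_MM)
print(f"1 deg error at {EYE_RELIEF_MM} mm -> {shift:.2f} mm shift")
```

The same function scales to other distances, which is why small angular errors matter more for virtual objects placed far from the viewer.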

Projecting Images onto the Eye

Getting a digital image to appear seamlessly in your field of view is the ultimate goal of AR, and it all hinges on one critical process: projecting that image directly onto your retina. This isn’t like a projector shining onto a wall; it’s about creating a focused, collimated beam of light that your eye interprets as a distant, solid object. The human eye can discern detail down to about 60 pixels per degree (PPD), and to meet this threshold, modern AR systems must pack incredibly dense pixels into a tiny display. Current-generation devices like Microsoft HoloLens 2 often achieve 40-50 PPD, with future prototypes targeting >60 PPD. This requires micro-displays with pixel pitches as small as 3-4 micrometers (µm), all while managing constraints like <500 milliwatts (mW) of power consumption for the entire optical engine to ensure viable battery life in wearable form factors.
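Average angular resolution is just pixel count divided by field of view, which makes the PPD targets easy to sanity-check. This is a rough sketch that assumes pixels are spread evenly across the field; the 1920-pixel width and 40° horizontal FoV are hypothetical example inputs, not the specification of any device named above.

```python
import math

def pixels_per_degree(pixels_across: int, fov_deg: float) -> float:
    """Average angular resolution, assuming pixels are spread evenly over the FoV."""
    return pixels_across / fov_deg

def pixels_needed(target_ppd: float, fov_deg: float) -> int:
    """Pixel count along one axis needed to reach a target PPD over a given FoV."""
    return math.ceil(target_ppd * fov_deg)

# Hypothetical example: a 1920-pixel-wide display spread over a 40 degree horizontal FoV.
print(pixels_per_degree(1920, 40.0))   # average PPD for that configuration
print(pixels_needed(60.0, 40.0))       # width required for 60 PPD over the same FoV
```

Widening the field of view at a fixed pixel count lowers PPD, which is one reason resolution and FoV pull against each other.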

“The challenge isn’t just resolution; it’s creating a bright, stable image that remains locked in space, indistinguishable from a physical object, even as your eye moves.”

The journey begins at the micro-display, typically a MicroLED or LCoS panel. For instance, a high-end MicroLED with 1920×1080 resolution and a pixel pitch of 4.5 µm has an active area of only about 8.6mm x 4.9mm (roughly 0.39 inches diagonal), and may be capable of emitting >2,000,000 nits of luminance. This raw brightness is necessary because the optical system, especially the waveguide combiner, is inherently inefficient, losing ~85-90% of the light through processes like in-coupling, propagation, and out-coupling. Therefore, to deliver a final image brightness of 500 nits to the eye (sufficient for indoor use), the display must start extraordinarily bright. This light is then precisely conditioned by collimation optics, which shape the light rays to be nearly parallel, with a divergence angle of <0.5 degrees. This collimation is what creates the illusion that the virtual screen is at a fixed distance, typically set to 2 meters or more for comfortable viewing, preventing eye strain.
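A panel's active area follows directly from its resolution and pixel pitch, which is a useful cross-check on quoted display sizes. The sketch below uses the 1920×1080 resolution and 4.5 µm pitch from the text; the function name is illustrative.

```python
import math

def panel_size_mm(h_pixels: int, v_pixels: int, pitch_um: float) -> tuple[float, float, float]:
    """Return (width, height, diagonal) of the active area in millimetres."""
    width = h_pixels * pitch_um / 1000.0
    height = v_pixels * pitch_um / 1000.0
    return width, height, math.hypot(width, height)

width, height, diagonal = panel_size_mm(1920, 1080, 4.5)
print(f"active area: {width:.2f} mm x {height:.2f} mm")
print(f"diagonal: {diagonal:.2f} mm ({diagonal / 25.4:.2f} in)")
```

At a 4.5 µm pitch, a 1080p panel comes out at well under half an inch diagonal, which is what allows the projector to fit in a glasses temple.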

The real magic happens in the eyebox, a 15mm x 10mm volumetric space where the image is fully visible. Your pupil, which typically ranges from 2mm in bright light to 7mm in the dark, must remain within this zone. To accommodate natural eye movement, advanced systems use pupil steering or eye tracking with 120 Hz cameras that update the image position with a latency of <10 milliseconds (ms). This ensures the projected image doesn’t jump or drift, keeping angular error below 5 arcminutes for a stable experience. The final image quality is measured by its modulation transfer function (MTF), with high-end systems aiming for an MTF50 value of >30 cycles/degree, ensuring text appears sharp and edges are well-defined, much like a high-quality physical display.

Key Use in Augmented Reality

Waveguide combiners are what make practical AR glasses possible. These slim, transparent optics are why today’s AR headsets (think HoloLens 2 or Magic Leap 2) can beam high-res digital content into your field of view without looking like clunky sci-fi gear. Let’s break down why they’re indispensable: global AR device shipments hit 12.8 million units in 2024, with 73% using waveguide combiners. Their ability to balance brightness, weight, and field of view (FoV) makes them irreplaceable for real-world use.

Industrial Maintenance & Repair: Factories and power plants use AR glasses with waveguide combiners to overlay schematics, sensor data, and step-by-step instructions onto machinery. For example, Siemens uses HoloLens 2 (with a 52° FoV waveguide combiner) to guide technicians fixing gas turbines: repair time drops from 4 hours to 55 minutes (77% faster), and error rates fall from 12% to 2% (83% reduction). The combiner’s 85% transmissivity keeps ambient light (like factory fluorescents) visible, while its 1.2mm thickness keeps the eyepiece itself light, which is critical for all-day wear.

Remote Expert Collaboration: Engineers or doctors often need real-time guidance from specialists. Waveguide combiners enable low-latency (20ms) video overlays, letting a remote expert draw annotations (arrows, text) directly onto the user’s view of a broken part or patient. Microsoft’s HoloLens 2 supports this with 1080p@60fps video, and the combiner’s 500 nits brightness ensures annotations stay visible even in bright daylight (10,000 lux). Field tests show this cuts problem-solving time by 35% compared to phone calls or emails.

Indoor Navigation: Retail stores, airports, and hospitals use AR navigation apps to guide users to products, gates, or rooms. The headset’s SLAM tracking delivers ±2cm positioning accuracy, and the waveguide combiner overlays digital arrows on real floor markers. The combiner’s 40°–50° FoV keeps the path in view even when turning corners, and its 1.5mm glass substrate resists scratches, which is key for high-traffic areas. Users report 40% faster task completion (e.g., finding a gate) with AR navigation vs. static signs.

Entertainment & Gaming: AR headsets with waveguide combiners support mixed-reality games where virtual characters interact with your living room. A 90Hz display refresh rate helps prevent motion sickness, and a 50° FoV makes virtual objects feel “present,” with no “screen door effect.” Gamers note 2x higher immersion scores vs. older lens-based systems, thanks to the combiner’s ability to align digital and real light paths within 0.1° precision.

Medical Training & Surgery: Surgeons use AR glasses with waveguide combiners to overlay 3D organ models (from CT/MRI scans) onto a patient’s body during procedures. A 4K display resolution (3,840 x 2,160 pixels) behind the combiner lets surgeons see fine details like blood vessel branches. During laparoscopic surgery, this reduces “search time” (looking back at monitors) by 50%, and the combiner’s 15mm x 12mm eyebox keeps the model visible and aligned even if the surgeon moves their head slightly.

Advantages Over Other Combiner Types

Waveguides are winning: 85% of commercial AR glasses launched in the past two years use them. Why? Because they solve critical problems like bulkiness, narrow field of view (FoV), and dim visuals that plague older designs. For example, a typical free-space optical combiner might be 50mm thick and weigh over 200g, while a waveguide equivalent is just 1.5mm thick and adds <20g.

- Thinness and Weight Reduction: Waveguide combiners use flat, substrate-based optics (glass or plastic), shrinking thickness to 1.0–1.5mm, about 10x thinner than free-space prism combiners (~15mm). This enables glasses-style designs of 60–90g, vs. >200g for mirror-based systems; full headsets with onboard compute and batteries weigh considerably more (e.g., HoloLens 2: 566g; Magic Leap 2: 260g). Lighter weight reduces user neck strain during 8-hour shifts, improving adoption in industrial settings by 40%.

- Wider Field of View (FoV): Older combiner types (like birdbath optics) max out at ~30° FoV due to physical size constraints. Waveguides use folded optical paths, enabling 50–60° FoV in commercial devices (e.g., Vuzix Shield: 50°; HoloLens 2: 52°). A 50° FoV covers ~70% of the human eye’s central vision, critical for immersive gaming or navigating large schematics.

- Higher Ambient Light Transmissivity: Semi-reflective mirrors (e.g., in Google Glass) only transmit 60–70% of real-world light, dimming the environment. Waveguides achieve 80–85% transmissivity (via anti-reflective coatings and low-absorption glass), making real-world visuals clearer in bright daylight (10,000 lux). This reduces eye strain and boosts safety in outdoor use cases.

- Manufacturing Scalability and Cost: Free-space optics require manual alignment (±0.01mm tolerance), costing $500–$1,000/unit. Waveguides are fabricated using nanoimprint lithography (for gratings) and sheet-level processing, cutting production costs to $50–$100/unit at scale. This enables mass production, e.g., Meta’s Project Nazare targets 10M units/year.

- Durability and Environmental Stability: Mirror-based combiners scratch easily (failing at 5N force) and misalign under temperature shifts (±0.5mm drift at 40°C). Waveguides, made of hardened glass (e.g., Corning Gorilla Glass), withstand 20N pressure and operate from -10°C to 60°C with <0.1° optical deviation. This reliability explains their use in factories and military applications.

- Power Efficiency and Brightness: Birdbath combiners lose >50% of light through reflection/absorption, requiring 1000+ nit projectors (drawing 2–3W). Waveguides direct light more efficiently (<20% loss), enabling 2000-nit images with 0.8W power draw—extending battery life from 2 to 6 hours in devices like Nreal Light.

Limitations and Design Challenges

Even the most advanced commercial waveguides today, like those in Microsoft’s HoloLens 2, achieve an optical efficiency of only ~1-2%, meaning over 98% of light from the micro-display is lost before it reaches the eye. This massive loss necessitates the use of ultra-bright micro-displays consuming >500mW of power, creating a drain on battery-limited systems. Furthermore, manufacturing defects remain a critical cost driver; a single 150mm diameter glass waveguide substrate can cost $200–$500 to produce, with yield rates for defect-free units often below 50% in high-volume production.
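Yield turns directly into cost: if only a fraction of substrates come out defect-free, each good one carries the cost of the scrapped ones. The sketch below shows that arithmetic for the substrate cost range and 50% yield mentioned above, under the simplifying assumption that scrapped units have no salvage value; the function name is illustrative.

```python
def cost_per_good_unit(unit_cost: float, yield_fraction: float) -> float:
    """Effective cost of one defect-free unit, assuming scrapped units are a total loss."""
    if not 0.0 < yield_fraction <= 1.0:
        raise ValueError("yield_fraction must be in (0, 1]")
    return unit_cost / yield_fraction

# Substrate cost range from the text (same currency units), at a 50% yield.
for cost in (200.0, 500.0):
    effective = cost_per_good_unit(cost, 0.5)
    print(f"substrate cost {cost:.0f} at 50% yield -> {effective:.0f} per good unit")
```

At 50% yield the effective cost doubles, so yield improvements can matter as much as cheaper processing.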

| Challenge Category | Key Metric / Parameter | Impact on Performance & Production |
|---|---|---|
| Optical Efficiency Loss | Total System Efficiency: 1-2%; In-Coupling Loss: ~30%; Out-Coupling Loss: ~40%; Propagation Loss: ~0.1 dB/cm | Requires micro-displays with >1,000,000 nits brightness, increasing power consumption and thermal load. |
| Manufacturing Complexity & Yield | Grating Feature Size: 300-500 nm; Substrate Alignment Tolerance: < ±1 µm; Production Yield: 40-60%; Unit Cost (High-Volume): $50–$100 | Drives final product cost; a 40-60% yield loss is common due to nanometer-scale defects in grating structures. |
| Field of View (FoV) vs. Form Factor | FoV (Current): 50°-60°; FoV (Theoretical Max with RGB): ~100°; Waveguide Thickness: 1.5-2.0 mm; Eyebox Size: 12mm x 8mm | A 60° FoV requires a ~3x larger exit pupil and thicker substrates, conflicting with slim glasses design. |
| Image Quality Issues | MTF (Modulation Transfer Function) at 30 lp/deg: <0.3; Ghosting Artifacts: 5-10% stray light; Color Uniformity Deviation: ΔE > 5; Angular Resolution Error: ±0.2° | Causes blurring and color fringing; a ±0.2° error misaligns virtual objects by ~7 mm at 2m distance. |
| Environmental Sensitivity | Operating Temperature Range: -10°C to 50°C; Thermal Expansion Coefficient: 8.5 µm/m·°C; Humidity-Induced Swell: <0.01% @ 90% RH | A 10°C change can shift optical alignment by ~8.5 µm across a 100 mm span, causing image misregistration and reducing MTF by ~15%. |
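The thermal figures in the table can be related through the linear expansion formula ΔL = α · L · ΔT. The sketch below uses the table's coefficient of 8.5 µm/m·°C; the 100 mm span is an assumption, chosen because it reproduces the ~8.5 µm shift for a 10°C change.

```python
def thermal_shift_um(cte_um_per_m_c: float, length_mm: float, delta_t_c: float) -> float:
    """Linear thermal expansion in micrometres: dL = alpha * L * dT."""
    return cte_um_per_m_c * (length_mm / 1000.0) * delta_t_c

CTE = 8.5  # um per metre per degree C, from the table
for span_mm in (50.0, 100.0):  # assumed spans, not stated in the source
    shift = thermal_shift_um(CTE, span_mm, 10.0)
    print(f"{span_mm:.0f} mm span, 10 C change -> {shift:.2f} um")
```

Since the alignment tolerance listed in the table is under ±1 µm, even the smaller span exceeds it, which is why temperature compensation matters.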

Achieving a 100° FoV—considered the minimum for full immersion—requires significantly larger in-coupling and out-coupling gratings. This forces the waveguide substrate to expand from a typical 1.5mm thickness to over 3.0mm, directly contradicting the goal of sleek, consumer-friendly glasses. Furthermore, a wider FoV distributes the same fixed amount of light over a larger retinal area, reducing overall luminance by ~40% for every 15° increase in FoV. This either demands a brighter projector, sucking more power, or results in a dimmer, less usable image. Even with brighter projectors, color uniformity suffers; achieving a consistent white point across a 60° FoV often results in a ΔE color difference > 5 (visible to the human eye) at the periphery compared to the center.
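The luminance penalty quoted above can be turned into a quick estimate. The sketch below assumes the ~40% drop per 15° compounds multiplicatively with each further 15° step, which is one reading of the rule of thumb rather than something the text states; the function name and defaults are illustrative.

```python
def relative_luminance(fov_increase_deg: float,
                       loss_per_step: float = 0.40,
                       step_deg: float = 15.0) -> float:
    """Remaining luminance fraction after widening the FoV, assuming compounding loss."""
    if fov_increase_deg < 0:
        raise ValueError("fov_increase_deg must be non-negative")
    return (1.0 - loss_per_step) ** (fov_increase_deg / step_deg)

# Example: widening from a 50 degree FoV toward 100 degrees in steps.
for increase in (15.0, 30.0, 50.0):
    remaining = relative_luminance(increase)
    print(f"+{increase:.0f} deg FoV -> {remaining * 100:.0f}% of original luminance")
```

Under this assumption, going from 50° to 100° leaves less than a fifth of the original luminance, so the projector would need to be several times brighter to compensate.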

Creating the surface relief gratings (SRGs) that power most waveguides requires electron-beam lithography or nanoimprint lithography, processes with inherent variability. A grating groove depth that deviates by just ±10 nanometers from the target 200nm depth can alter the diffraction efficiency by ~15%, creating bright and dark spots in the image known as mura. This defect type can scrap ~25% of all production units.