Waveguides (e.g., WR-90 for 8.2-12.4GHz) outperform coaxial cables at high frequencies (>2GHz) with lower loss (0.1dB/m vs. 0.5dB/m), higher power handling (kW range), and better shielding. They enable precise microwave signal transmission in radar (e.g., X-band) and satellite systems by minimizing dispersion and EMI.

What is a Microwave

Microwaves are a type of electromagnetic wave with frequencies ranging from 300 MHz to 300 GHz, sitting between radio waves and infrared on the spectrum. They’re widely used in communication, radar, and heating (like your kitchen microwave, which operates at 2.45 GHz). Compared with lower-frequency radio waves, microwaves have shorter wavelengths (1 mm to 1 m) and far more room for wide channels, which lets them carry high-bandwidth data. That is essential for 5G mmWave networks (24-40 GHz), satellite communications (12-18 GHz), and Wi-Fi (5 GHz).
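To check the 1 mm to 1 m figure, the free-space wavelength is simply λ = c/f. A minimal Python sketch (the helper name `wavelength_m` is our own, not from any library) that evaluates it for the frequencies mentioned above:

```python
# Free-space wavelength from frequency: lambda = c / f.
C = 299_792_458.0  # speed of light in vacuum, m/s


def wavelength_m(freq_hz: float) -> float:
    """Return the free-space wavelength in metres for a frequency in hertz."""
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    return C / freq_hz


# Band edges and examples quoted in the text
examples = {
    "lower microwave edge (300 MHz)": 300e6,
    "microwave oven (2.45 GHz)": 2.45e9,
    "Wi-Fi (5 GHz)": 5e9,
    "5G mmWave (28 GHz)": 28e9,
    "upper microwave edge (300 GHz)": 300e9,
}

for name, f in examples.items():
    print(f"{name}: {wavelength_m(f) * 1000:.1f} mm")
```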

A key advantage of microwaves is their ability to focus energy efficiently. For example, a typical microwave oven converts ~70% of electrical power into heating, while radar systems can transmit pulses at 1-100 kW peak power for detecting objects kilometers away. In telecommunications, microwave links can achieve data rates up to 1 Gbps over 30-50 km distances, making them a cost-effective alternative to fiber optics in remote areas.

The power handling of microwaves depends on the medium: air, waveguides, or coaxial cables. In clear air, atmospheric absorption at 10 GHz is low (~0.1 dB/km or less, on top of ordinary spreading loss), but heavy rain can add several dB/km. Meanwhile, waveguides (rectangular or circular metal tubes) keep losses to roughly 0.01-0.1 dB/m, depending on size and frequency, making them ideal for high-power applications (e.g., radar, industrial heating) where coaxial cables would overheat.

Microwave circuits rely on precise wavelength matching: a 1/4-wave transformer at 5 GHz is just 15 mm long in air (shorter still on a dielectric substrate), requiring tight manufacturing tolerances (±0.1 mm). Components like magnetrons (efficiency: ~65%) and GaN amplifiers (high output power at 30 GHz, though typically well under 50% efficiency at that frequency) push performance limits. In radar systems, pulse repetition rates (100 Hz to 10 kHz) and duty cycles (0.1-10%) balance detection range and resolution.
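The numbers in this paragraph follow from three textbook relations: a quarter wavelength λ/4 (divided by √ε_eff on a dielectric substrate), average power = peak power × duty cycle, and the maximum unambiguous range c/(2·PRF), which is one reason PRF trades off against range. The sketch below assumes an illustrative substrate with ε_eff = 3.0 and a hypothetical 100 kW, 1% duty-cycle radar; the function names are made up for this example.

```python
C = 299_792_458.0  # speed of light, m/s


def quarter_wave_mm(freq_hz, eps_eff=1.0):
    """Quarter wavelength in mm; eps_eff > 1 models a dielectric substrate (assumption)."""
    return C / (freq_hz * eps_eff ** 0.5) / 4 * 1000


def average_power_w(peak_w, duty_cycle):
    """Average transmitted power for a pulsed radar: P_avg = P_peak * duty cycle."""
    return peak_w * duty_cycle


def max_unambiguous_range_km(prf_hz):
    """Echoes must return before the next pulse leaves: R_max = c / (2 * PRF)."""
    return C / (2 * prf_hz) / 1000


print(f"lambda/4 at 5 GHz in air: {quarter_wave_mm(5e9):.1f} mm")
print(f"lambda/4 at 5 GHz, eps_eff = 3.0 (hypothetical substrate): {quarter_wave_mm(5e9, 3.0):.1f} mm")
print(f"100 kW peak at 1% duty: {average_power_w(100e3, 0.01) / 1e3:.1f} kW average")
for prf in (100, 1_000, 10_000):
    print(f"PRF {prf} Hz -> max unambiguous range {max_unambiguous_range_km(prf):.0f} km")
```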

Antenna Basics Explained

An antenna is a metal structure that converts electrical signals into radio waves (transmitting) or vice versa (receiving). The simplest antenna, a dipole, is just two conductive rods, each ¼ wavelength long. For FM radio (88-108 MHz), that means each rod is about 75 cm long, while a Wi-Fi antenna (2.4 GHz) shrinks to about 3 cm per side. Antennas don’t create energy; they focus it directionally, with gains ranging from about 2 dBi (omnidirectional) to 24 dBi and beyond (highly directional dishes).
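A half-wave dipole's arm length can be estimated from λ/4. Real wire dipoles are usually trimmed a few percent shorter because of end effects; the 0.95 factor below is a common rule of thumb, used here as an assumption rather than a design value.

```python
C = 299_792_458.0  # speed of light, m/s


def dipole_arm_cm(freq_hz, shortening=1.0):
    """Length of one arm of a half-wave dipole (lambda / 4), in cm.

    shortening < 1 applies an end-effect correction; ~0.95 is a common
    rule of thumb for thin wire dipoles (assumption, not from the text).
    """
    return C / freq_hz / 4 * 100 * shortening


for label, f in [("FM radio, 100 MHz", 100e6), ("Wi-Fi, 2.4 GHz", 2.4e9)]:
    ideal = dipole_arm_cm(f)
    trimmed = dipole_arm_cm(f, shortening=0.95)
    print(f"{label}: {ideal:.1f} cm per arm (about {trimmed:.1f} cm after trimming)")
```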

Key rule: The larger the antenna relative to wavelength, the more focused the beam. A 1-meter parabolic dish at 10 GHz (3 cm wavelength) can achieve a beamwidth of about 2°, perfect for point-to-point links.
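One common rule of thumb for the half-power beamwidth of a parabolic dish is θ ≈ 70·λ/D degrees, where the constant depends on how the feed illuminates the reflector. Under that assumption, a 1 m dish at 10 GHz comes out at roughly 2°; the sketch below is an estimate, not a substitute for a measured antenna pattern.

```python
C = 299_792_458.0  # speed of light, m/s


def dish_beamwidth_deg(diameter_m, freq_hz, k=70.0):
    """Approximate -3 dB beamwidth of a parabolic dish: theta ~ k * lambda / D (degrees).

    k ~ 70 is a rule of thumb for typical tapered feed illumination;
    uniform illumination gives a narrower beam (k ~ 58).
    """
    return k * (C / freq_hz) / diameter_m


print(f"1.0 m dish at 10 GHz: {dish_beamwidth_deg(1.0, 10e9):.1f} deg")
print(f"0.6 m dish at 10 GHz: {dish_beamwidth_deg(0.6, 10e9):.1f} deg")
```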

Antenna efficiency matters: cheap consumer models lose 30-50% of power as heat, while industrial-grade antennas keep losses under 10%. Impedance matching is critical: a load badly mismatched to a 50-ohm line (VSWR of about 2.6:1) reflects roughly 20% of the power back toward the transmitter, wasting energy. A VSWR (Voltage Standing Wave Ratio) below 1.5:1 (about 4% reflected) is ideal; at 2:1 about 11% is reflected, and losses climb quickly beyond that.
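The link between VSWR, reflection coefficient |Γ|, and reflected power is standard transmission-line math: |Γ| = (VSWR − 1)/(VSWR + 1), the reflected power fraction is |Γ|², and mismatch loss = −10·log10(1 − |Γ|²). A small sketch, using a hypothetical 100-ohm resistive load on a 50-ohm line as the example mismatch:

```python
import math


def gamma_from_vswr(vswr):
    """Magnitude of the reflection coefficient: |Gamma| = (VSWR - 1) / (VSWR + 1)."""
    return (vswr - 1) / (vswr + 1)


def vswr_from_gamma(gamma):
    """Inverse relation: VSWR = (1 + |Gamma|) / (1 - |Gamma|)."""
    return (1 + gamma) / (1 - gamma)


def gamma_from_loads(z_load, z0=50.0):
    """|Gamma| for a purely resistive load on a line of impedance z0."""
    return abs((z_load - z0) / (z_load + z0))


def mismatch_loss_db(gamma):
    """Power lost to reflection, in dB: -10 log10(1 - |Gamma|^2)."""
    return -10 * math.log10(1 - gamma ** 2)


for vswr in (1.5, 2.0, 3.0):
    g = gamma_from_vswr(vswr)
    print(f"VSWR {vswr}:1 -> {g**2:.1%} reflected, {mismatch_loss_db(g):.2f} dB mismatch loss")

g = gamma_from_loads(100.0)  # hypothetical 100-ohm load on a 50-ohm line
print(f"100-ohm load on 50-ohm line: VSWR {vswr_from_gamma(g):.1f}:1, {g**2:.1%} reflected")
```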

Polarization (vertical, horizontal, circular) affects real-world performance. A vertically polarized antenna works best for ground-level signals (e.g., walkie-talkies at 400 MHz), while circular polarization (used in GPS at 1.5 GHz) tolerates polarization rotation along the path and changes in receiver orientation. Mismatched polarization can cause 3-10 dB loss: 3 dB halves the received power, and 10 dB cuts it to a tenth.
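For two linearly polarized antennas, the received fraction of power follows the polarization loss factor cos²θ, where θ is the angle between them; a linear antenna receiving a circularly polarized wave loses about 3 dB. This sketch assumes ideal antennas, so a 90° misalignment gives infinite loss, whereas real antennas leak and show a finite cross-polarization loss.

```python
import math


def polarization_loss_db(angle_deg):
    """Loss between two linearly polarized antennas misaligned by angle_deg.

    Polarization loss factor PLF = cos^2(angle); returned as a positive dB loss.
    Ideal antennas at 90 degrees give infinite loss; real ones leak, so the
    measured cross-polarization loss is finite.
    """
    plf = math.cos(math.radians(angle_deg)) ** 2
    if plf < 1e-12:
        return math.inf
    return -10 * math.log10(plf)


# A linear antenna captures half the power of a circularly polarized wave.
LINEAR_TO_CIRCULAR_DB = 10 * math.log10(2)

for angle in (0, 30, 45, 60, 72, 90):
    print(f"{angle:>2} deg misalignment: {polarization_loss_db(angle):.1f} dB")
print(f"linear <-> circular: {LINEAR_TO_CIRCULAR_DB:.1f} dB")
```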

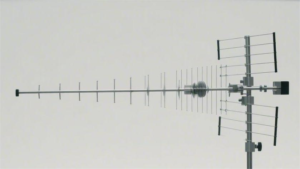

Frequency response determines bandwidth. A log-periodic antenna covers 100 MHz to 2 GHz with consistent 6 dBi gain, while a Yagi-Uda (e.g., TV antennas) trades bandwidth for 12-15 dBi gain in a narrow 50 MHz range. For 5G mmWave (28-39 GHz), phased arrays with 256 tiny antennas steer beams electronically at microsecond speeds.
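Electronic beam steering in a phased array works by applying a progressive phase shift across the elements: for a uniform linear array with spacing d, element n gets a phase of −n·2π·d·sin(θ)/λ so that all contributions add up in the direction θ. The sketch below assumes an 8-element line at 28 GHz with half-wavelength spacing, steered 30° off broadside; a real 256-element panel applies the same idea in two dimensions.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def element_phases_deg(n_elements, spacing_m, freq_hz, steer_deg):
    """Phase settings (0-360 deg) for a uniform linear array steered to steer_deg.

    Element n is given a phase of -n * k * d * sin(theta), with k = 2*pi/lambda,
    so that all contributions arrive in phase toward steer_deg off broadside.
    """
    wavelength = C / freq_hz
    delta = 2 * math.pi * spacing_m * math.sin(math.radians(steer_deg)) / wavelength
    return [math.degrees(-n * delta) % 360 for n in range(n_elements)]


f = 28e9
d = (C / f) / 2  # half-wavelength spacing (a common choice, assumed here)
phases = element_phases_deg(8, d, f, steer_deg=30)
print(f"element spacing: {d * 1000:.2f} mm")
print("phase settings (deg):", [round(p, 1) for p in phases])
```

With half-wavelength spacing (about 5.4 mm at 28 GHz), a 16 × 16 grid of 256 elements spans only about 8.6 cm on a side.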

Key Differences Compared

Microwaves and antennas are both essential in wireless communication, but they serve fundamentally different roles. Microwaves are electromagnetic waves (300 MHz–300 GHz), while antennas are physical devices that transmit or receive those waves. A 5G base station might use 24–40 GHz microwaves, but without a properly tuned phased-array antenna (with 64–256 elements), the signal won’t travel efficiently.

| Feature | Microwave | Antenna |
|---|---|---|
| Primary Role | Carries data/energy | Transmits/receives signals |
| Frequency Range | 300 MHz–300 GHz | Depends on design (e.g., 800 MHz–60 GHz) |
| Power Handling | Up to 100 kW (radar systems) | Limited by material (e.g., 500 W for a dipole) |
| Efficiency Loss | ~0.1 dB/km in air | ~0.5–3 dB due to impedance mismatch |
| Cost Factor | Generated by circuits ($50–$5,000) | Physical device ($2–$10,000) |

Wavelength determines antenna size. A 2.4 GHz Wi-Fi signal has a 12.5 cm wavelength, so its antenna elements are ~3 cm long. In contrast, a 900 MHz cellular antenna needs ~8 cm elements. Microwaves don’t “care” about size—but antennas must match their wavelength to work efficiently.

Directionality is another key difference. Microwaves propagate in straight lines (mostly), but antennas control beam shape. A parabolic dish (60 cm diameter at 10 GHz) focuses energy into a 5° beam, while an omnidirectional whip antenna radiates 360° with 2–5 dBi gain. If you use the wrong type, signal strength can drop by 10–20 dB: that is 90–99% of the power, which in free space cuts your range by roughly 70–90%.
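In free space, received power falls with the square of distance, so an extra loss of L dB shrinks the usable range by a factor of 10^(−L/20). A quick sketch; the path-loss exponent n = 2 is the free-space assumption, and cluttered environments with larger exponents lose proportionally less range for the same dB drop.

```python
def range_fraction(loss_db, path_loss_exponent=2.0):
    """Fraction of the original range left after an extra loss of loss_db.

    Received power falls as 1 / d**n (n = 2 in free space), so range
    scales as 10 ** (-loss_db / (10 * n)).
    """
    return 10 ** (-loss_db / (10 * path_loss_exponent))


for loss in (3, 10, 20):
    print(f"{loss} dB extra loss: free-space range x {range_fraction(loss):.2f}, "
          f"power x {10 ** (-loss / 10):.3f}")
```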

Power handling varies drastically. A microwave waveguide can carry 10 kW at 30 GHz with well under 1 dB/m of loss, but a coaxial cable at the same frequency overheats above 1 kW. Antennas face similar limits: a cheap PCB antenna burns out at 5 W, while an industrial horn antenna handles 500 W continuously.

Why Waveguides Matter

Waveguides are hollow metal pipes that guide microwaves with minimal loss, making them crucial for high-power and high-frequency applications. Unlike coaxial cables, which struggle above 18 GHz, waveguides efficiently carry signals from 1 GHz to 300 GHz, with losses as low as 0.01 dB/m in large guides at the low end of that range. That makes them critical for radar, satellite comms, and medical imaging.

| Feature | Waveguide | Coaxial Cable |
|---|---|---|
| Frequency Range | 1–300 GHz | DC–18 GHz |
| Power Handling | Up to 100 kW (pulsed) | Typically <1 kW |
| Loss at 10 GHz | ~0.1 dB/m (WR-90, copper) | 0.5–1 dB/m |
| Cost (per meter) | $50–$500 | $5–$50 |
| Lifetime | 20+ years (metal fatigue) | 5–10 years (dielectric decay) |

Size matters. A WR-90 waveguide (common for 8–12 GHz) has inner dimensions of 22.86 × 10.16 mm, chosen so that only the dominant TE10 mode propagates across the band: TE10 cuts off at about 6.56 GHz, and the next mode (TE20) only appears above about 13.1 GHz. Compare this to a coaxial cable at 10 GHz, where even a 0.1 mm imperfection can cause 10% reflection loss. Waveguides also handle peak powers better: a radar pulse at 50 kW would melt coaxial cables but propagates cleanly in a copper waveguide.
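The WR-90 dimensions can be checked against the textbook formulas for an air-filled rectangular waveguide: the TE_mn cutoff frequency is f_c = (c/2)·√((m/a)² + (n/b)²), and the TE10 conductor loss depends on the surface resistance of the walls. The sketch below assumes ideal smooth copper (σ = 5.8 × 10⁷ S/m); real waveguides with surface roughness or plating show somewhat higher loss.

```python
import math

C = 299_792_458.0        # speed of light, m/s
MU0 = 4e-7 * math.pi     # permeability of free space, H/m
ETA0 = 376.73            # impedance of free space, ohms
SIGMA_CU = 5.8e7         # copper conductivity, S/m (ideal smooth walls assumed)


def te_cutoff_hz(m, n, a, b):
    """Cutoff frequency of the TE_mn mode in an air-filled a x b guide (metres)."""
    return (C / 2) * math.sqrt((m / a) ** 2 + (n / b) ** 2)


def te10_loss_db_per_m(freq_hz, a, b, sigma=SIGMA_CU):
    """Conductor loss of the TE10 mode (textbook formula, no surface roughness)."""
    fc = te_cutoff_hz(1, 0, a, b)
    if freq_hz <= fc:
        raise ValueError("below cutoff: TE10 does not propagate")
    rs = math.sqrt(math.pi * freq_hz * MU0 / sigma)  # surface resistance, ohms
    ratio_sq = (fc / freq_hz) ** 2
    alpha_np = rs / (b * ETA0 * math.sqrt(1 - ratio_sq)) * (1 + (2 * b / a) * ratio_sq)
    return 8.686 * alpha_np  # nepers/m -> dB/m


a, b = 22.86e-3, 10.16e-3  # WR-90 inner dimensions
print(f"TE10 cutoff: {te_cutoff_hz(1, 0, a, b) / 1e9:.2f} GHz")
print(f"TE20 cutoff: {te_cutoff_hz(2, 0, a, b) / 1e9:.2f} GHz")
print(f"TE01 cutoff: {te_cutoff_hz(0, 1, a, b) / 1e9:.2f} GHz")
print(f"Copper loss at 10 GHz: {te10_loss_db_per_m(10e9, a, b):.3f} dB/m")
```

Under these assumptions the TE10 cutoff lands near 6.56 GHz and the copper loss at 10 GHz is roughly 0.1 dB/m, in line with the WR-90 figure quoted at the top of this article.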

Efficiency is unmatched. In satellite ground stations, waveguides reduce feedline losses from 3 dB to <0.5 dB, saving ~50% transmit power. For 5G mmWave (28 GHz), waveguides with integrated antennas achieve beam steering accuracy of ±0.2°, versus ±1.5° for cable-fed systems.
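The transmit-power saving from a lower-loss feed follows directly from the dB difference: to deliver the same power to the antenna, the transmitter needs 10^((L_new − L_old)/10) times its previous output. A minimal sketch: going from 3 dB to exactly 0.5 dB saves about 44%, and pushing the residual loss lower moves the saving toward 50%.

```python
def tx_power_ratio(old_loss_db, new_loss_db):
    """Transmit power needed after changing feed loss, relative to before.

    Delivering the same power to the antenna needs
    10 ** ((new - old) / 10) times the original transmitter output.
    """
    return 10 ** ((new_loss_db - old_loss_db) / 10)


ratio = tx_power_ratio(3.0, 0.5)
print(f"Cutting feed loss from 3 dB to 0.5 dB needs {ratio:.0%} of the original power "
      f"({1 - ratio:.0%} saving)")
```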

Common Uses Today

Microwaves and antennas are everywhere in modern tech—from your smartphone’s 5G connection to airport radar scanning planes 300 km away. The global microwave technology market is worth $45 billion, growing at 7% annually, while antennas ship over 5 billion units per year for everything from IoT sensors to satellite communications.

1. Cellular Networks (4G/5G)

Your phone’s 4G antenna typically operates at 700-2600 MHz with 2-4 dBi gain, while 5G mmWave pushes into 24-40 GHz using phased arrays with 64-256 elements. A single 5G small cell covers 150-300 meters at 28 GHz, delivering 1-3 Gbps speeds—but needs 3-5x more antennas than 4G due to shorter range. Base stations use rectangular waveguide feeds to minimize loss below 0.5 dB across 30-meter tower runs.
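Part of the reason mmWave cells are small is free-space path loss, FSPL = 20·log10(4π·d·f/c), which grows by 20·log10 of the frequency ratio when antenna gains are held fixed. The sketch below compares 2 GHz and 28 GHz over a hypothetical 200 m link; it ignores walls, foliage, and rain, which hit mmWave harder, as well as the extra array gain mmWave base stations use to compensate.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def fspl_db(distance_m, freq_hz):
    """Free-space path loss between isotropic antennas: 20*log10(4*pi*d*f/c)."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / C)


for f in (2.0e9, 28e9):
    print(f"{f / 1e9:>4.0f} GHz over 200 m: {fspl_db(200, f):.1f} dB")
print(f"difference: {fspl_db(200, 28e9) - fspl_db(200, 2.0e9):.1f} dB")
```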

2. Satellite Communications

Geostationary satellites at 36,000 km altitude rely on parabolic dish antennas (1-5m diameter) beaming 12-18 GHz microwaves. A typical VSAT terminal uses a 1.2m dish with roughly 40 dBi of gain at Ku-band, achieving 50 Mbps throughput despite 250ms latency. Waveguides here prevent 3-6 dB signal loss that would occur with coaxial cables over 10m+ runs in ground stations.
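The dish gain and latency figures can be estimated with the aperture formula G = η·(πD/λ)² and the path up to a geostationary satellite and back down. The sketch assumes an aperture efficiency η = 0.6 (a typical value, not from the text) and a satellite directly overhead, which gives the minimum one-hop delay; terminals at lower elevation angles see longer slant ranges and slightly more delay.

```python
import math

C = 299_792_458.0          # speed of light, m/s
GEO_ALTITUDE_M = 35_786e3  # geostationary altitude above the equator, m


def dish_gain_dbi(diameter_m, freq_hz, efficiency=0.6):
    """Parabolic dish gain: G = efficiency * (pi * D / lambda)**2, in dBi.

    Aperture efficiency of ~0.55-0.7 is typical; 0.6 is an assumption.
    """
    wavelength = C / freq_hz
    return 10 * math.log10(efficiency * (math.pi * diameter_m / wavelength) ** 2)


def one_hop_delay_ms(slant_range_m=GEO_ALTITUDE_M):
    """Ground -> satellite -> ground propagation delay (best case: satellite overhead)."""
    return 2 * slant_range_m / C * 1000


print(f"1.2 m dish at 12 GHz: {dish_gain_dbi(1.2, 12e9):.1f} dBi")
print(f"GEO one-hop delay (minimum): {one_hop_delay_ms():.0f} ms")
```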

3. Radar Systems

Airport surveillance radar transmits 1 MW pulses at 2.8 GHz through waveguides capable of handling 100 kW average power. The return signal, often as weak as -120 dBm, gets captured by 4m-wide phased arrays with 0.1° beamwidth accuracy. Modern automotive radar at 77 GHz fits 4x4cm antenna arrays in your bumper, detecting objects 250m away with ±5cm range precision.
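Two of the radar figures above reduce to simple relations: range resolution ΔR = c/(2B) for a signal of bandwidth B, and the conversion from dBm to watts, P = 10^((dBm − 30)/10). The 1 GHz and 4 GHz sweep bandwidths below are illustrative assumptions for automotive radar, not values from the text. Resolution (separating two nearby targets) is different from range precision for a single target, which can be finer.

```python
C = 299_792_458.0  # speed of light, m/s


def range_resolution_m(bandwidth_hz):
    """Two targets closer than c / (2B) fall into the same range bin."""
    return C / (2 * bandwidth_hz)


def dbm_to_watts(dbm):
    """Convert power in dBm (dB relative to 1 mW) to watts."""
    return 10 ** ((dbm - 30) / 10)


for bw in (1e9, 4e9):  # assumed sweep bandwidths
    print(f"{bw / 1e9:.0f} GHz sweep: {range_resolution_m(bw) * 100:.1f} cm resolution")
print(f"-120 dBm = {dbm_to_watts(-120):.1e} W")
```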

4. Medical Imaging

MRI machines use 64-128 MHz RF pulses (the proton resonance frequencies of 1.5-3 Tesla magnets; technically radio waves, but using waveguide principles) transmitted through copper-lined bore tubes to achieve sub-millimeter imaging resolution. The RF coils require careful impedance matching: a 1% mismatch causes 10% image artifacts. Meanwhile, microwave ablation for cancer treatment delivers 50W at 2.45 GHz through needle antennas to destroy tumors with ±2mm targeting precision.
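The RF frequency in MRI is set by the magnet: protons resonate at the Larmor frequency f = γ̄·B₀, with γ̄ ≈ 42.577 MHz/T for hydrogen. A one-function sketch for the two common field strengths:

```python
GAMMA_BAR_H1_MHZ_PER_T = 42.577  # proton gyromagnetic ratio / (2*pi), MHz per tesla


def larmor_mhz(field_tesla):
    """Proton resonance (Larmor) frequency that the RF coil must be tuned to."""
    return GAMMA_BAR_H1_MHZ_PER_T * field_tesla


for b0 in (1.5, 3.0):
    print(f"{b0} T -> {larmor_mhz(b0):.1f} MHz")
```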

5. Consumer Devices

Your Wi-Fi 6 router uses 4-8 dipole antennas at 5.5 dBi gain each, pushing 1.2 Gbps through 80 MHz channels. Microwave ovens, the most common consumer waveguide application, focus 800W at 2.45 GHz into food with roughly 70% energy efficiency; most of the remaining 30% is lost as heat in the magnetron and power supply. RFID tags also rely on printed antennas: 13.56 MHz (HF) tags work at close range (centimeters to about a meter), while UHF tags (860-960 MHz) printed on 0.1mm foil can be read from 5m away in warehouse tracking systems.
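The 1.2 Gbps Wi-Fi 6 figure can be reproduced from the 802.11ax PHY parameters. The sketch below assumes 2 spatial streams, MCS 11 (1024-QAM, 10 bits per subcarrier, coding rate 5/6), 980 data subcarriers in an 80 MHz channel, and a 12.8 µs OFDM symbol plus a 0.8 µs guard interval; other configurations give different rates.

```python
def he_phy_rate_mbps(data_subcarriers, bits_per_symbol, coding_rate, symbol_us, streams):
    """PHY rate = subcarriers * bits/subcarrier * code rate * streams / OFDM symbol time.

    Bits per microsecond equals megabits per second.
    """
    return data_subcarriers * bits_per_symbol * coding_rate * streams / symbol_us


# Wi-Fi 6 (802.11ax), 80 MHz: 980 data subcarriers, MCS 11 (1024-QAM, rate 5/6),
# 12.8 us symbol + 0.8 us guard interval, 2 spatial streams (assumed configuration)
rate = he_phy_rate_mbps(980, 10, 5 / 6, 12.8 + 0.8, 2)
print(f"{rate:.0f} Mbps")
```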

The cost-performance tradeoffs dictate designs: 5G antennas cost $0.50–$5 each in volume, while satellite feed horns run $200–$2,000. But whether it’s saving 0.1 dB in a waveguide bend or squeezing 8 antennas into a smartphone, these technologies enable everything from global internet to life-saving medical tools.

Choosing the Right One

Selecting the right microwave and antenna system isn’t about finding the “best” option; it’s about matching technical specs to your budget, range, and environment. A $10,000 satellite antenna would be overkill for a 500m Wi-Fi link, just as cheap PCB antennas would doom a 10km radar system. The global antenna market offers 5,000+ models across 20+ categories, with prices ranging from $0.10 for RFID tags to $50,000 for military-grade phased arrays.

| Factor | Microwave Consideration | Antenna Consideration |
|---|---|---|
| Frequency | 2.4 GHz (Wi-Fi) vs. 28 GHz (5G mmWave) | Must match λ/4 element size (3cm at 2.4 GHz) |
| Power | 5W (IoT) vs. 100kW (Radar) | Copper handles 500W; aluminum fails at 200W |
| Range | 50m (Bluetooth) vs. 50km (Microwave link) | High-gain (24dBi) dishes needed for >5km |
| Environment | Rain causes 5dB/km loss at 25GHz | Saltwater corrosion reduces lifespan by 60% |
| Budget | $50 (SDR) vs. $5k (Spectrum analyzer) | $20 omni vs. $2k directional antenna |
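The table's rule that high-gain dishes are needed beyond about 5 km can be sanity-checked with the Friis link budget in dB form: P_r = P_t + G_t + G_r − FSPL. The sketch below uses a hypothetical 5.8 GHz link over 5 km with 20 dBm of transmit power and no extra losses; swapping 2 dBi antennas for 24 dBi dishes at both ends buys 44 dB of margin.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def received_dbm(tx_dbm, tx_gain_dbi, rx_gain_dbi, distance_m, freq_hz, other_losses_db=0.0):
    """Friis link budget in dB form: Pr = Pt + Gt + Gr - FSPL - other losses."""
    fspl = 20 * math.log10(4 * math.pi * distance_m * freq_hz / C)
    return tx_dbm + tx_gain_dbi + rx_gain_dbi - fspl - other_losses_db


# Hypothetical 5 km, 5.8 GHz point-to-point link at 20 dBm transmit power
for gain in (2.0, 24.0):
    pr = received_dbm(20.0, gain, gain, 5_000, 5.8e9)
    print(f"{gain:>4.0f} dBi at both ends: {pr:.1f} dBm received")
```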

A sub-6GHz 5G network (3.5GHz) needs panel antennas with 16 dBi gain and ±45° beamwidth, while mmWave (28GHz) requires phased arrays of 256 micro-antennas on PCBs under 10 cm across. Get this wrong, and your signal strength drops 20dB, equivalent to 99% power loss. For reference:

- Wi-Fi 6 (5GHz): 3-5cm dipole antennas

- FM Radio (100MHz): 75cm whip antennas

- Satellite TV (12GHz): 60cm parabolic dishes

A 50W amateur radio rig needs antennas rated for 100W peaks (a 2× safety margin), while 4G base stations push 300W continuous through aluminum alloy radiators. Cheap PCB trace antennas burn out at 2W, but ceramic-loaded dipoles survive 50W at 90% efficiency.

In tropical climates, humidity increases VSWR by 15% annually, requiring stainless steel or gold-plated connectors. For offshore oil rigs, salt spray degrades aluminum antennas in 3-5 years versus 15+ years for titanium. Urban areas face multipath interference; solving it may require 4×4 MIMO antennas at $200/unit instead of $20 single-element models.