A microwave antenna transmits/receives RF signals (typically 1-300 GHz) by converting electrical pulses to electromagnetic waves (Tx) or vice versa (Rx). For example, 5G mmWave antennas (24-47 GHz) use patch radiators: feed lines inject 10-20 dBm signals, exciting surface currents to radiate waves. With 15-20 dBi gain and >80% efficiency, they focus beams (1-5° width) to boost signal strength in high-loss environments.

What Are Microwaves?

Roughly 70% of global mobile data traffic relies on microwave links for backhaul (the connection between cell sites and core networks), according to a 2023 report by the Telecommunications Industry Association. Even the cosmic microwave background, leftover radiation from the Big Bang, floods space at 2.7 Kelvin (-270.45°C), a faint whisper of the universe’s birth. These waves aren’t just technical curiosities; they’re critical to modern communication and technology, operating at frequencies typically ranging from 1 gigahertz (GHz) to 300 GHz, with wavelengths shrinking from 30 centimeters down to 1 millimeter.

At their core, microwaves behave like all electromagnetic waves: they travel at the speed of light (~3×10⁸ meters per second in a vacuum) and carry energy. But their short wavelengths give them unique traits. For one, they don’t easily pass through metal; a sheet of aluminum just 0.1 millimeters thick can reflect 99.9% of microwave energy, which is why microwave ovens use metal walls to trap waves inside. They do penetrate non-metallic materials like water, glass, or plastic, though, and water molecules in particular absorb microwave energy efficiently.
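The frequency and wavelength figures in this section are tied together by λ = c / f. A minimal sketch of that conversion, with the helper name `wavelength_m` chosen here purely for illustration:

```python
# Illustrative sketch: free-space wavelength from frequency (lambda = c / f).
C_M_PER_S = 299_792_458.0  # speed of light in a vacuum, meters per second


def wavelength_m(freq_hz: float) -> float:
    """Return the free-space wavelength in meters for a frequency in hertz."""
    return C_M_PER_S / freq_hz


if __name__ == "__main__":
    # Band edges of the microwave range plus the microwave-oven frequency.
    for f_ghz in (1.0, 2.45, 300.0):
        print(f"{f_ghz:g} GHz -> {wavelength_m(f_ghz * 1e9) * 100:.2f} cm")
```

The three frequencies come out at roughly 30 cm, 12.2 cm, and 1 mm.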

A microwave oven’s magnetron generates 2.45 GHz waves (wavelength: ~12.2 cm). This frequency is not a natural resonance of water; it sits in a band set aside for industrial and household equipment, and water absorbs it well enough to heat food. The field reverses 2.45 billion times per second, the polar water molecules try to follow it, friction turns that motion into heat, and your food warms up. This is why dry foods (low water content) cook unevenly: less molecular motion means less heat.

Higher carrier frequencies also leave room for wider channels. A single 10 GHz microwave link can carry 10 gigabits per second (Gbps) of data, 100 times faster than a 100 MHz radio link over the same distance. That’s why telecom companies use microwave towers to connect remote areas: a single tower with a 6-foot dish can beam signals 50 kilometers with minimal signal loss (around 0.5 dB per km at 10 GHz).

A weather radar using 10 GHz waves can spot rainstorms up to 400 km away, resolving details as small as 100 meters.

Microwaves even power precision technologies. Air traffic control radars use X-band microwaves (8–12 GHz) to track planes with 0.1-second updates, while medical microwave ablation uses 915 MHz waves to heat and destroy tumors, targeting tissue with ±2°C precision to avoid damaging healthy cells. Even your car’s collision-avoidance radar uses 77 GHz microwaves, with wavelengths short enough to detect a pedestrian 150 meters ahead in heavy rain.

Turning Electricity into Waves

In a typical 5G base station, the transmitter’s power amplifier consumes 60-70% of the system’s total energy to generate radio frequency (RF) signals. The efficiency of this conversion is critical; a high-power radar system might need to generate a 100-kilowatt pulse, but if the amplifier is only 50% efficient, it simultaneously produces 100 kilowatts of waste heat that must be managed. The signal passes through three stages on its way to the antenna:

- The Oscillator: Generates the precise, high-frequency electrical signal.

- The Modulator: Imprints data (voice, video, internet packets) onto the carrier wave.

- The Power Amplifier: Boosts the weak signal to a power level high enough for transmission over distance.

Modern systems use crystal oscillators made from a precisely cut slice of quartz to establish a stable reference frequency, often at a low value like 10 megahertz (MHz). This crystal vibrates with remarkable stability, drifting by less than ±2.5 parts per million (ppm) across a -40°C to +85°C temperature range. For microwave frequencies, this stable low frequency is then multiplied. A Phase-Locked Loop (PLL) circuit can take a 100 MHz input and synthesize a clean, stable output at 28 GHz, the frequency used for many 5G mmWave applications. The purity of this signal is measured as phase noise, typically below -110 dBc/Hz at a 100 kHz offset from the carrier frequency for a high-quality oscillator. Any noise here directly corrupts the transmitted data.
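The multiplication and drift figures in this paragraph are simple ratios. The sketch below assumes that a PLL carries the reference's fractional (ppm) error through to the output frequency; the function names are hypothetical.

```python
# Illustrative sketch: PLL multiplication ratio and carrier drift from a ppm spec.

def pll_multiplier(f_out_hz: float, f_ref_hz: float) -> float:
    """Ratio by which the PLL must multiply the reference frequency."""
    return f_out_hz / f_ref_hz


def drift_hz(carrier_hz: float, stability_ppm: float) -> float:
    """Worst-case frequency offset for a given fractional stability in ppm."""
    return carrier_hz * stability_ppm * 1e-6


if __name__ == "__main__":
    print(pll_multiplier(28e9, 100e6))  # 100 MHz reference up to 28 GHz
    print(drift_hz(28e9, 2.5))          # +/-2.5 ppm expressed in Hz at 28 GHz
```

A 100 MHz reference needs a ratio of 280 to reach 28 GHz, and ±2.5 ppm at 28 GHz corresponds to about ±70 kHz.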

The modulator then imprints data onto this carrier. For a simple digital signal, this is done by shifting the wave’s phase. Quadrature Phase Shift Keying (QPSK) sets the phase to one of four distinct values (0°, 90°, 180°, or 270°), allowing each symbol to represent 2 bits of data. More complex schemes like 256-QAM pack 8 bits into each symbol, dramatically increasing data capacity but requiring a much cleaner signal, with a Signal-to-Noise Ratio (SNR) exceeding 30 dB, to be decodable. This modulated signal is still very weak, with a power level around 1 milliwatt (0 dBm).

The final and most power-hungry stage is the power amplifier (PA). Its job is to boost the signal to 10, 100, or even 1000 watts for long-distance transmission. The efficiency of this amplification is paramount. Traditional Gallium Arsenide (GaAs) amplifiers might achieve 15-20% power-added efficiency (PAE), meaning that for every 100 watts of DC power drawn, at most about 20 watts become RF energy and 80 watts or more are dissipated as heat.
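The amplifier numbers in this section can be checked with a few lines of arithmetic. This sketch treats efficiency as RF output divided by DC input, a simplification of PAE that ignores the small drive power; the helper names are illustrative.

```python
import math


def watts_to_dbm(power_w: float) -> float:
    """Convert power in watts to dBm (decibels relative to 1 milliwatt)."""
    return 10 * math.log10(power_w / 1e-3)


def required_gain_db(p_in_dbm: float, p_out_w: float) -> float:
    """Gain needed to raise an input level in dBm to an output power in watts."""
    return watts_to_dbm(p_out_w) - p_in_dbm


def dc_and_heat_w(rf_out_w: float, efficiency: float) -> tuple[float, float]:
    """Return (DC input power, waste heat) for a given RF output.

    Assumes efficiency = RF out / DC in, ignoring drive power.
    """
    dc_in_w = rf_out_w / efficiency
    return dc_in_w, dc_in_w - rf_out_w


if __name__ == "__main__":
    for p_out_w in (10, 100, 1000):
        print(p_out_w, "W needs", round(required_gain_db(0.0, p_out_w)), "dB of gain")
    print(dc_and_heat_w(100e3, 0.5))  # radar pulse example at 50% efficiency
    print(dc_and_heat_w(20.0, 0.2))   # 20 W of RF at 20% efficiency
```

Going from 0 dBm to 10, 100, and 1000 watts takes about 40, 50, and 60 dB of gain respectively.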

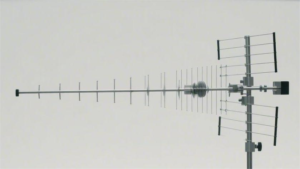

Shaping and Aiming the Signal

An unguided radio frequency (RF) signal emitted from a simple wire would spread out in nearly all directions, wasting over 99.9% of its power and causing interference with other devices. Effective microwave communication requires precisely shaping the energy into a focused beam, much like a flashlight concentrates light. This focusing is quantified as antenna gain, which can increase the effective signal power in a specific direction by a factor of 100 to 10,000 (20 to 40 dBi). For a point-to-point microwave link spanning 15 kilometers, a 2-foot diameter dish with a gain of 38 dBi makes the signal appear about 6300 times stronger in the intended direction than it would from an isotropic antenna, the reference implied by the unit dBi. The key components responsible for this spatial control are:

- The Feed Horn: Launches the signal toward the reflector.

- The Reflector (Dish): Focuses the diverging waves into a parallel beam.

- The Radome: A protective cover that minimizes signal loss.
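The gain figures quoted before this list move between decibels and plain power ratios. A small sketch of that conversion, with function names that are illustrative only:

```python
import math


def dbi_to_linear(gain_dbi: float) -> float:
    """Convert antenna gain in dBi to a linear power ratio."""
    return 10 ** (gain_dbi / 10)


def linear_to_dbi(ratio: float) -> float:
    """Convert a linear power ratio back to dBi."""
    return 10 * math.log10(ratio)


if __name__ == "__main__":
    for gain in (20, 38, 40):
        print(f"{gain} dBi -> x{dbi_to_linear(gain):,.0f}")
```

Every 10 dB is a factor of ten in power, so 20 dBi is a factor of 100, 40 dBi a factor of 10,000, and 38 dBi about 6,300.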

A standard circular feed horn for a 10 GHz system might have an aperture diameter of approximately 5.8 centimeters, optimized to match the wavelength of 3 centimeters. The geometry of the flare determines the illumination efficiency; if the horn illuminates the entire dish evenly, efficiency can reach 70-80%, but if the signal spills over the edges, efficiency can drop below 50%. The horn must also ensure the correct polarization—whether the electromagnetic wave oscillates horizontally or vertically. Maintaining consistent polarization is critical; a 3 dB loss (a 50% power reduction) occurs if the receiving antenna’s polarization is misaligned by 45 degrees from the transmitter’s.
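The 3 dB figure for a 45 degree polarization mismatch follows from the cos² relationship between two linearly polarized antennas. A minimal sketch, assuming ideal linear polarization on both ends:

```python
import math


def polarization_loss_db(misalignment_deg: float) -> float:
    """Power loss in dB between two linearly polarized antennas.

    Valid for 0 <= misalignment_deg < 90; at 90 degrees the ideal loss is infinite.
    """
    coupling = math.cos(math.radians(misalignment_deg)) ** 2  # received power fraction
    return -10 * math.log10(coupling)


if __name__ == "__main__":
    for angle in (0, 10, 45):
        print(f"{angle} deg -> {polarization_loss_db(angle):.2f} dB")
```

Small errors cost little (about 0.13 dB at 10 degrees), which is why installers can align polarization by eye, while 45 degrees costs half the power.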

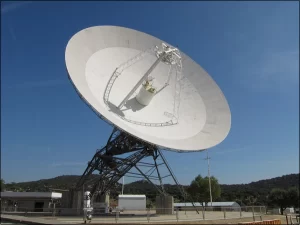

The primary function of the parabolic dish is to convert a spherical wavefront from the feed into a planar wavefront for transmission. This collimation is what creates the narrow beam.

The gain of a parabolic dish follows from its size relative to the wavelength: Gain (in dBi) = 10 * log10(η * (π * D / λ)²), where D is the diameter, λ is the wavelength, and η is the aperture efficiency (typically 55-65%). This means a 6-foot (1.8-meter) dish operating at 6 GHz (wavelength = 5 cm) will have a gain of approximately 39 dBi. The beamwidth, the angular width of the main signal lobe, is inversely related to the dish size. Using the common approximation of 70 * (λ / D) degrees, the same 6-foot dish at 6 GHz produces a beamwidth of about 1.9 degrees. Hitting a target 30 km away, this beam will spread to a spot diameter of roughly 1 km.
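The gain formula can be evaluated directly. The sketch below also uses the rule of thumb that beamwidth is about 70·λ/D degrees, which is an assumption at this point in the text, and a default efficiency of 0.6 picked from the middle of the quoted range.

```python
import math


def dish_gain_dbi(diameter_m: float, wavelength_m: float, efficiency: float = 0.6) -> float:
    """Gain of a parabolic dish: 10*log10(eta * (pi * D / lambda)^2)."""
    return 10 * math.log10(efficiency * (math.pi * diameter_m / wavelength_m) ** 2)


def beamwidth_deg(diameter_m: float, wavelength_m: float, k: float = 70.0) -> float:
    """Approximate half-power beamwidth in degrees (rule of thumb, k ~ 70)."""
    return k * wavelength_m / diameter_m


def spot_diameter_m(beam_deg: float, distance_m: float) -> float:
    """Width of the main lobe at a given distance."""
    return 2 * distance_m * math.tan(math.radians(beam_deg / 2))


if __name__ == "__main__":
    d, lam = 1.8, 0.05  # 6-foot dish at 6 GHz
    bw = beamwidth_deg(d, lam)
    print(f"gain {dish_gain_dbi(d, lam):.1f} dBi, beamwidth {bw:.2f} deg")
    print(f"spot at 30 km: {spot_diameter_m(bw, 30_000) / 1000:.2f} km")
```

Because gain depends on (D/λ)², doubling either the diameter or the frequency adds about 6 dB.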

For higher frequencies, the beam is tighter; a 30 GHz link on the same 1.8-meter dish has a beamwidth of only about 0.4 degrees, requiring alignment within about 0.2 degrees (half the beamwidth) to avoid a 3 dB signal drop.

Finally, the entire assembly is often protected by a radome, a cover made from material like fiberglass or PTFE that is transparent to radio waves. A well-designed radome introduces a signal loss of less than 0.2 dB. However, if rain, snow, or ice accumulates on it, the loss can increase significantly: wet snow can cause an additional 5 dB of attenuation. For a link with a 20 dB fade margin, this uses up 5 dB of the margin before any rain fade along the path is counted, making an outage more likely.
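The alignment tolerance can be estimated with a widely used rule of thumb, assumed here rather than taken from the text: pointing loss ≈ 12·(θ/θ₃dB)² dB, where θ is the pointing error and θ₃dB is the half-power beamwidth. It reproduces the 3 dB drop at an error of half a beamwidth.

```python
def pointing_loss_db(offset_deg: float, beamwidth_deg: float) -> float:
    """Approximate gain loss in dB for a pointing error inside the main lobe.

    Rule-of-thumb model (an assumption, not from the article); only meaningful
    for offsets up to roughly one beamwidth.
    """
    return 12.0 * (offset_deg / beamwidth_deg) ** 2


if __name__ == "__main__":
    beamwidth = 0.5  # degrees, example value
    for offset in (0.05, 0.125, 0.25):
        print(f"{offset} deg off -> {pointing_loss_db(offset, beamwidth):.2f} dB")
```

Loss grows with the square of the error, so halving the pointing error cuts the loss to a quarter.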

How a Dish Focuses the Signal

The shape of a dish is described by its f/D ratio, the focal length divided by the diameter. A common f/D ratio for satellite dishes is 0.6. This means a 60-centimeter diameter dish would have a focal length of 36 centimeters. The depth of the parabola is calculated by the formula Depth = D² / (16 * f). For our 60 cm dish with an f/D of 0.6, the depth is a shallow (60²) / (16 * 36) = 6.25 cm. This shallow curve is crucial for achieving a high aperture efficiency, typically between 55% and 75% in well-designed systems.
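The focal length and depth arithmetic above is easy to reproduce. A short sketch with illustrative function names; any consistent length unit works:

```python
def focal_length(diameter: float, f_over_d: float) -> float:
    """Focal length of a dish from its diameter and f/D ratio."""
    return diameter * f_over_d


def dish_depth(diameter: float, focal: float) -> float:
    """Depth of a paraboloid at its rim: D^2 / (16 * f)."""
    return diameter ** 2 / (16 * focal)


if __name__ == "__main__":
    d_cm = 60.0
    f_cm = focal_length(d_cm, 0.6)
    print(f"focal length {f_cm:.1f} cm, depth {dish_depth(d_cm, f_cm):.2f} cm")
```

Lowering the f/D ratio for the same diameter shortens the focal length and makes the dish deeper.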

The surface accuracy of the dish is equally critical; for a microwave signal at 12 GHz (wavelength 2.5 cm), a surface deformation (dent or warp) of just 3 mm (approximately λ/8) can cause a 1 dB reduction in gain due to phase errors in the reflected wave, effectively wasting 20% of the transmitted power.

The process of focusing begins at the feed horn. The horn does not emit a perfectly parallel wave; it produces a spherical wavefront that diverges. When this expanding wavefront hits the parabolic surface, each point on the wave is reflected.

The geometry of the parabola ensures that the path length from the focal point to the dish and then to a plane perpendicular to the axis is constant. This constant path length is the magic. It means that all parts of the wave, after reflection, are in phase when they leave the dish. This in-phase condition transforms the diverging spherical wave into a planar wavefront, a collection of parallel rays. This collimation is what creates the narrow beam. The beamwidth (the angle of the main lobe) is approximately equal to 70 * (λ / D) degrees, where λ is the wavelength and D is the diameter. A 2.4-meter dish operating at the Ka-band (30 GHz, λ=1 cm) has an incredibly narrow beamwidth of about 0.29 degrees. To put that in perspective, aiming this antenna at a target 10 km away is like using a laser pointer; the beam would be only about 50 meters wide at that distance.

The relationship between the dish’s physical characteristics and its performance is direct and quantifiable.

| Parameter | Impact on Performance | Example Values & Quantified Effect |
| --- | --- | --- |
| Diameter (D) | Gain increases with the square of the diameter. Doubling the diameter quadruples the gain (+6 dB). | A 1 m dish at 12 GHz has ~40 dBi gain. A 2 m dish at the same frequency has ~46 dBi gain. |
| Surface Accuracy | Measured as RMS (Root Mean Square) error ε. Gain loss ≈ 686 * (ε/λ)² in dB (Ruze's equation). | For 30 GHz (λ = 1 cm), a 0.45 mm RMS error causes a 1.4 dB gain loss. |
| f/D Ratio | Sets how well a given feed illuminates the dish. Too low (~0.3): the feed under-illuminates the rim. Too high (~0.8): energy spills past the edge. | An f/D of 0.4 might yield 50% efficiency. An optimal f/D of 0.6 can achieve 70% efficiency. |
| Frequency (f) | For a fixed diameter, gain increases with the square of the frequency. Higher frequency = narrower beam. | A 1 m dish has ~34 dBi gain at 6 GHz, but ~46 dBi gain at 24 GHz, a 12 dB (16x) increase. |
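The equal-path-length property of the parabola described before this table can be checked numerically. The sketch below models the dish profile as z = r² / (4f) with the vertex at the origin and measures the path from the focus to the surface and on to a flat plane in front of the dish; the 36 cm focal length reuses the earlier 60 cm example, and the plane position is arbitrary.

```python
import math


def path_length(r: float, focal: float, plane_z: float) -> float:
    """Focus -> dish surface at radius r -> aperture plane at height plane_z."""
    z = r ** 2 / (4 * focal)                      # parabola height at radius r
    focus_to_surface = math.hypot(r, focal - z)   # straight ray from the focus
    surface_to_plane = plane_z - z                # reflected ray runs parallel to the axis
    return focus_to_surface + surface_to_plane


if __name__ == "__main__":
    focal, plane_z = 0.36, 0.10  # meters; plane sits just in front of the rim
    for r in (0.0, 0.1, 0.2, 0.3):
        print(f"r = {r:.1f} m -> path = {path_length(r, focal, plane_z):.6f} m")
```

Every ray comes out at the same total length (focal length plus plane height), which is why the reflected wavefront is flat.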

A 5 mm misalignment in the feed’s position can easily degrade gain by 0.5 dB. For a satellite TV reception system using an 80 cm offset-feed dish (common for Direct-to-Home services), this precision allows it to collect enough signal from a geostationary satellite 36,000 km away to achieve a Carrier-to-Noise (C/N) ratio exceeding 15 dB, which is sufficient for clear high-definition video decoding with a Bit Error Rate (BER) better than 10⁻¹¹.

The Receiver’s Job

A signal arriving at a satellite dish from a probe in deep space, for instance, can be as weak as 10⁻¹⁹ watts (-160 dBm). The primary figure of merit for a receiver is its noise figure, which quantifies how much internal electronic noise it adds to the already weak signal. A state-of-the-art Low-Noise Block Downconverter (LNB) on a satellite TV dish might have a noise figure of 0.7 dB, meaning it degrades the signal-to-noise ratio by only about 17%.
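Both conversions in this paragraph, dBm to watts and noise figure to SNR degradation, are one-line formulas. A minimal sketch with illustrative helper names:

```python
def dbm_to_watts(power_dbm: float) -> float:
    """Convert a power level in dBm to watts (0 dBm = 1 mW)."""
    return 10 ** (power_dbm / 10) * 1e-3


def snr_degradation_percent(noise_figure_db: float) -> float:
    """Percentage by which a stage worsens SNR, from its noise figure in dB."""
    noise_factor = 10 ** (noise_figure_db / 10)  # linear ratio of input SNR to output SNR
    return (noise_factor - 1) * 100


if __name__ == "__main__":
    print(f"{dbm_to_watts(-160):.1e} W")
    print(f"{snr_degradation_percent(0.7):.1f} %")
```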

The receiver’s performance directly determines the achievable data rate; according to the Shannon-Hartley theorem, a channel with a 10 MHz bandwidth and a 20 dB signal-to-noise ratio has a maximum theoretical data capacity of approximately 66 Mbps.

The first component the incoming signal encounters is the Low-Noise Amplifier (LNA). Its job is to boost the signal’s power by a factor of 100 to 1000 (20 to 30 dB) before any significant degradation occurs. This initial amplification is critical because any noise added at this stage is amplified by all subsequent stages. A high-quality LNA for a 5G base station might have a gain of 30 dB and a noise figure of 1.2 dB, meaning if the input signal is -90 dBm, the output would be around -60 dBm.
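The Shannon-Hartley limit quoted above is C = B · log2(1 + SNR), with the SNR as a linear ratio rather than in dB. A short sketch that also shows the LNA level arithmetic, since gains in dB simply add to levels in dBm:

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Upper bound on error-free data rate for a given bandwidth and SNR."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_hz * math.log2(1 + snr_linear)


def lna_output_dbm(input_dbm: float, gain_db: float) -> float:
    """Signal level after an amplifier: dB gain adds to the dBm level."""
    return input_dbm + gain_db


if __name__ == "__main__":
    print(f"{shannon_capacity_bps(10e6, 20) / 1e6:.1f} Mbps")
    print(f"{lna_output_dbm(-90, 30)} dBm")
```

Capacity grows linearly with bandwidth but only logarithmically with SNR, so adding spectrum usually pays off faster than adding power.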

A bandpass filter with a 40 MHz bandwidth centered at 28.5 GHz will reject out-of-band interference, such as a nearby radar transmission at 24 GHz, by as much as 40 dB, attenuating the interfering signal by a factor of 10,000.

The next stage is the downconversion process. It is often impractical and expensive to process signals directly at their multi-gigahertz carrier frequencies. A mixer circuit is used to translate the high-frequency signal down to a lower, more manageable Intermediate Frequency (IF). For example, a satellite LNB receives a block of frequencies between 10.7 and 12.75 GHz. It uses a local oscillator generating a fixed frequency of 9.75 GHz to mix the signal down to an IF band between 950 MHz and 3 GHz. This process must be precise; a local oscillator frequency drift of just 0.001% (100 kHz at 10 GHz) can corrupt the data.
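The downconversion arithmetic above is a subtraction: with the local oscillator below the signal, the IF is the RF minus the LO. A minimal sketch, working in MHz to keep the numbers exact; function names are illustrative.

```python
def if_frequency_mhz(rf_mhz: float, lo_mhz: float) -> float:
    """Intermediate frequency for low-side injection (LO below the RF)."""
    return rf_mhz - lo_mhz


def lo_drift_hz(lo_hz: float, drift_percent: float) -> float:
    """Absolute frequency error for a fractional drift given in percent."""
    return lo_hz * drift_percent / 100


if __name__ == "__main__":
    lo = 9_750  # MHz
    for rf in (10_700, 12_750):
        print(f"{rf} MHz -> IF {if_frequency_mhz(rf, lo)} MHz")
    print(f"drift: {lo_drift_hz(10e9, 0.001) / 1e3:.0f} kHz")
```

Any LO error shifts the whole IF band by the same amount, so the drift carries straight through to the demodulator.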

The mixer itself introduces some noise and signal loss, with a conversion loss typically around 6 dB, which cuts the signal power to about a quarter. After downconversion, the IF signal undergoes further amplification by an IF amplifier with a controllable gain of 50 to 70 dB, which is automatically adjusted by an Automatic Gain Control (AGC) circuit to maintain a constant level, compensating for signal fades of up to 30 dB caused by rain or atmospheric conditions.

Finally, the signal reaches the demodulator, the most complex part of a modern digital receiver. Its first task is carrier recovery, locking onto the exact frequency and phase of the incoming signal with an accuracy within 0.01% of the symbol rate.

For a 100 Megabaud signal, the phase error must be less than 1 degree. Then, the timing recovery circuit samples the signal at the precise center of each symbol, with a timing jitter of less than 2% of the symbol period to minimize errors. The demodulator then decodes the modulation, such as 256-QAM, which packs 8 bits per symbol. It must accurately distinguish between 256 different phase and amplitude states, even with a Signal-to-Noise Ratio of just 30 dB.
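The symbol-level figures here follow from two relations: bits per symbol is log2 of the constellation size, and the symbol period is the inverse of the symbol rate. The sketch below computes the raw bit rate before any coding overhead, a simplification, along with the 2% timing budget; the names are illustrative.

```python
import math


def bits_per_symbol(order: int) -> int:
    """Bits carried by one symbol of an M-ary constellation (M a power of two)."""
    return round(math.log2(order))


def raw_bit_rate_bps(symbol_rate_baud: float, order: int) -> float:
    """Bit rate before error-correction overhead."""
    return symbol_rate_baud * bits_per_symbol(order)


def jitter_budget_s(symbol_rate_baud: float, fraction: float = 0.02) -> float:
    """Allowed timing jitter as a fraction of the symbol period."""
    return fraction / symbol_rate_baud


if __name__ == "__main__":
    for name, order in (("QPSK", 4), ("256-QAM", 256)):
        print(name, bits_per_symbol(order), "bits/symbol")
    print(raw_bit_rate_bps(100e6, 256) / 1e6, "Mbps raw")
    print(jitter_budget_s(100e6) * 1e12, "ps of jitter allowed")
```

At 100 Megabaud the symbol period is 10 ns, so a 2% jitter limit leaves about 200 picoseconds of timing error.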

Common Uses in Daily Life

An estimated 60% or more of all global mobile data traffic is carried by microwave links for mobile backhaul, connecting cell towers to the core network. In a typical urban area, a single carrier might deploy thousands of point-to-point microwave links, each operating at frequencies like 18 GHz, 23 GHz, or 38 GHz, with capacities ranging from 150 Mbps to 2 Gbps per link. These systems form a wireless fiber network atop city buildings, with antenna dishes typically 0.3 to 1.2 meters in diameter, transmitting data over distances of 3 to 15 kilometers with an availability of 99.999% (about 5 minutes of downtime per year). The following table outlines several key applications:

| Application | Typical Frequency Bands | Key Parameters & Real-World Data |
| --- | --- | --- |
| Mobile Network Backhaul | 6 GHz – 42 GHz | Capacity: 500 Mbps – 10 Gbps; Link Distance: 3-25 km; Antenna Size: 0.6 m – 1.2 m dish |
| Satellite Television (DTH) | Ku-band (10.7-12.75 GHz) | Dish Size: 60-90 cm; Signal Strength from Satellite: ≈ -110 dBm; Data Rate: ~40 Mbps per transponder |
| Automotive Radar (ADAS) | 24 GHz (Short-Range), 77 GHz (Long-Range) | Range: 1-150 m; Accuracy: ±0.1 m; Angular Resolution: < 2° at 77 GHz |
| Wi-Fi (5 GHz band) | 5.15-5.85 GHz | Output Power: < 1 Watt; Range: ~30 m indoors; Data Rate: Up to 1.3 Gbps (Wi-Fi 5) |
| RFID & Access Control | 2.45 GHz, 5.8 GHz | Read Range: 1-10 m; Transaction Time: < 100 ms |
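The availability figure quoted before this table translates into downtime with one multiplication. A small sketch, assuming a 365-day year:

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Expected outage time per year for a given availability percentage."""
    return (1 - availability_percent / 100) * MINUTES_PER_YEAR


if __name__ == "__main__":
    for availability in (99.9, 99.99, 99.999):
        minutes = downtime_minutes_per_year(availability)
        print(f"{availability}% -> {minutes:.1f} minutes per year")
```

Each extra nine cuts the allowed outage time by a factor of ten, which is what drives fade margins and adaptive modulation in link design.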

A microwave link operating in the 23 GHz band can provide a full 1 Gbps Ethernet connection with a latency of less than 0.5 milliseconds. The antennas for these links are typically 1-foot or 2-foot dishes mounted on poles, aligned with a precision of less than 1 degree. For longer hops, such as connecting across a bay or valley, systems use lower frequencies like 6 GHz with larger 4-foot dishes to achieve paths of 40 kilometers or more.

The reliability of these links is exceptional, with modern systems featuring adaptive modulation that can dynamically reduce the data rate from 1024-QAM to QPSK during heavy rain (which can attenuate a 40 GHz signal by roughly 10 dB/km) to maintain the connection rather than dropping it entirely.

In our homes, the most direct interaction is with satellite TV dishes. A standard offset-feed parabolic dish with a 75 cm diameter is precisely aimed at a geostationary satellite 35,786 km away. The dish focuses the extremely weak signal onto an LNB (Low-Noise Block Downconverter), whose low-noise front end has an effective noise temperature of about 100 Kelvin to maximize sensitivity. The system receives a signal power level of approximately -110 dBm (about 10⁻¹⁴ watts), which the receiver amplifies and decodes into hundreds of television channels. Modern cars are also equipped with microwave antennas, often embedded in the bumper or grille.