Millimeter wave (mmWave) propagation faces significant challenges due to high atmospheric absorption and sensitivity to obstacles. Oxygen absorption peaks at 60 GHz (about 15 dB/km), while rain attenuation can exceed 20 dB/km in heavy downpours. Building penetration losses range from 40-80 dB, requiring dense small-cell deployments (200-300 m spacing).

Beamforming alignment must maintain <1° precision for 28 GHz links, and foliage attenuation reaches 0.4 dB/m. Practical solutions include adaptive beam steering, repeaters for NLoS scenarios, and predictive modeling using 3D ray-tracing tools such as Altair WinProp or Remcom Wireless InSite. Operators typically pair high-capacity 26/28 GHz bands with lower-frequency anchor bands for coverage.


Signal Blockage by Buildings

Millimeter wave (mmWave) signals, operating at 24 GHz to 100 GHz, deliver ultra-fast speeds (up to 2 Gbps) but struggle with physical obstructions. Buildings, especially concrete and metal structures, cause severe signal loss—up to 30-40 dB per wall penetration, reducing usable range from 200-300 meters in open areas to just 10-20 meters indoors. In urban environments, 60-70% of mmWave links fail due to building blockages, forcing carriers to deploy 3-5x more small cells to maintain coverage. Even glass windows can attenuate signals by 5-10 dB, while brick walls may cut power by 15-20 dB.

The biggest challenge is non-line-of-sight (NLOS) propagation. Unlike sub-6 GHz signals, which diffract around obstacles more readily, mmWave beams (typically 1-5° wide) lose 90-95% of their energy when blocked. A 5G mmWave base station with 64 antennas might achieve 800 Mbps at 100 meters in clear view but drop to <50 Mbps after one wall. This forces carriers to use beamforming and repeaters, adding $15,000-30,000 per site in extra hardware.

Material composition matters:

- Concrete (15-20 cm thick) causes 20-30 dB loss, equivalent to a 99-99.9% power reduction.

- Metal panels or roofs reflect signals, creating 10-15 dB fade zones.

- Double-glazed windows reduce signal strength by 8-12 dB, while tinted glass adds 3-5 dB more loss.
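Decibel figures like these are easier to compare once converted into power fractions. A minimal Python sketch (the helper names and the material labels are illustrative, not from any library) that turns a penetration loss in dB into the share of power that is lost:

```python
def remaining_power_fraction(loss_db: float) -> float:
    """Fraction of incident power left after a loss of `loss_db` decibels."""
    return 10 ** (-loss_db / 10)


def lost_power_percent(loss_db: float) -> float:
    """Percentage of incident power removed by a loss of `loss_db` decibels."""
    return 100 * (1 - remaining_power_fraction(loss_db))


# Upper-bound losses taken from the material list above.
for material, loss_db in [("concrete", 30), ("metal fade zone", 15), ("double glazing", 12)]:
    print(f"{material}: {loss_db} dB -> {lost_power_percent(loss_db):.1f}% of power lost")
```

Every 10 dB is another factor of ten: 20 dB leaves 1% of the power and 30 dB leaves 0.1%, which is why a 20-30 dB concrete wall corresponds to a 99-99.9% power reduction.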

Solutions in use today:

- Dense small-cell networks (every 50-100 meters) compensate for blockage but raise deployment costs by 40-60%.

- Intelligent beam steering adjusts direction in 2-5 milliseconds, improving link stability by 30-50%.

- Repeaters and reflectors placed on rooftops recover 10-15 dB signal loss at a cost of $5,000-10,000 per unit.
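Beam steering in a phased array works by applying a progressive phase shift across the antenna elements, so that re-steering amounts to recomputing those phases for a new angle. A minimal sketch, assuming an idealized uniform linear array; the function name, the sign convention, and the 8-element, 28 GHz, half-wavelength-spacing parameters are illustrative assumptions, not any vendor's implementation:

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def steering_phases(num_elements: int, freq_hz: float, spacing_m: float, angle_deg: float) -> list[float]:
    """Phase shifts (radians, wrapped to [0, 2*pi)) that point an idealized
    uniform linear array's main beam `angle_deg` away from broadside."""
    wavelength = SPEED_OF_LIGHT / freq_hz
    # Extra path length between neighbouring elements toward the target angle,
    # converted to phase; the negative sign is one common (assumed) convention.
    step = -2 * math.pi * spacing_m * math.sin(math.radians(angle_deg)) / wavelength
    return [(n * step) % (2 * math.pi) for n in range(num_elements)]


# Illustrative parameters: 8 elements at 28 GHz, half-wavelength spacing, steer to 30 degrees.
half_wavelength = SPEED_OF_LIGHT / 28e9 / 2
for n, phase in enumerate(steering_phases(8, 28e9, half_wavelength, 30.0)):
    print(f"element {n}: {math.degrees(phase):6.1f} deg")
```

With half-wavelength spacing, steering to 30° means each element lags its neighbour by 90°; a real base station does this across a two-dimensional array and often mixes analog and digital weighting.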

Without mitigation, mmWave 5G struggles indoors, with 70-80% of users experiencing 50% slower speeds compared to outdoor coverage. Future improvements in AI-driven beam tracking and low-loss building materials (e.g., mmWave-transparent windows) could reduce losses by 10-15 dB, but for now, signal blockage remains a key bottleneck in urban 5G rollout.

Rain and Weather Effects

Millimeter wave (mmWave) signals, especially in the 24-100 GHz range, are highly sensitive to weather conditions. Rain causes the most significant disruption—moderate rainfall (5 mm/hr) can attenuate signals by 1-3 dB/km, while heavy rain (25 mm/hr) increases loss to 5-10 dB/km. In tropical regions with 100+ mm/hr rainfall, mmWave links may suffer 15-20 dB/km loss, reducing effective range from 500 meters to under 100 meters. Fog and humidity also degrade performance: 90% relative humidity adds 0.5-1 dB/km, and thick fog (0.1 g/m³ density) can cause 3-5 dB/km loss. Snow is less problematic but still impactful—wet snow attenuates signals by 2-4 dB/km, while dry snow has minimal effect (<1 dB/km).
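The rain figures above follow the power-law model used in ITU-R Recommendation P.838, where specific attenuation is γ = k·R^α (dB/km) for a rain rate R in mm/h. A minimal sketch; the coefficients below are approximately the P.838-3 horizontal-polarization values at 30 GHz and should be treated as assumptions (look up the exact k and α for your frequency and polarization):

```python
# Assumed coefficients: approximately ITU-R P.838-3, 30 GHz, horizontal polarization.
K_30GHZ_H = 0.2403
ALPHA_30GHZ_H = 0.9485


def rain_specific_attenuation(rain_rate_mm_h: float, k: float = K_30GHZ_H, alpha: float = ALPHA_30GHZ_H) -> float:
    """Specific rain attenuation in dB/km from the power law gamma = k * R**alpha."""
    return k * rain_rate_mm_h ** alpha


def rain_path_loss(rain_rate_mm_h: float, path_km: float, **coeffs: float) -> float:
    """Rain loss in dB over a path, assuming uniform rain along the whole path."""
    return rain_specific_attenuation(rain_rate_mm_h, **coeffs) * path_km


for rate in (5, 25, 100):  # moderate, heavy, tropical downpour
    print(f"{rate:>3} mm/h: {rain_specific_attenuation(rate):5.1f} dB/km")
```

With these coefficients the three rates give roughly 1.1, 5.1, and 19 dB/km, in line with the ranges quoted above. Heavy rain cells rarely cover an entire path, so planning methods such as ITU-R P.530 apply a distance reduction factor; the uniform-rain assumption here overestimates loss on longer links.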

The primary issue is signal absorption and scattering. At 60 GHz, oxygen molecules alone cause 10-15 dB/km loss, making long-distance mmWave transmission impractical beyond 1-2 km. Raindrops (typically 0.5-5 mm in diameter) are comparable in size to mmWave wavelengths, so they both absorb energy and scatter it in the Mie regime (Rayleigh scattering applies only to particles much smaller than the wavelength). A 28 GHz link delivering 1 Gbps in clear weather may drop to 300-400 Mbps in heavy rain, with latency spikes up to 20-30 ms due to retransmissions. Carriers compensate by boosting transmit power (30-40 dBm), but this increases energy costs by 15-25% and shortens hardware lifespan by 10-20%.
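Transmit power in dBm is logarithmic, so the 30-40 dBm range above spans a tenfold change in radiated power. A minimal conversion sketch (function names are illustrative):

```python
import math


def dbm_to_watts(p_dbm: float) -> float:
    """Convert a power level in dBm (decibels relative to 1 mW) to watts."""
    return 10 ** ((p_dbm - 30) / 10)


def watts_to_dbm(p_w: float) -> float:
    """Convert a power level in watts to dBm."""
    return 10 * math.log10(p_w) + 30


low, high = dbm_to_watts(30), dbm_to_watts(40)
print(f"30 dBm = {low:g} W, 40 dBm = {high:g} W, ratio = {high / low:g}x")
```

Each extra 3 dB roughly doubles the RF output and each extra 10 dB multiplies it by ten, which is why raising transmit power to ride out rain fades shows up directly in energy costs.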

Temperature and wind also play a role. Thermal expansion from 30°C to 50°C can misalign antennas by 0.5-1.0°, reducing gain by 3-6 dB. Strong winds (50+ km/h) may shift tower-mounted antennas by 2-3 cm, requiring realignment every 6-12 months at a cost of $500-1,000 per site. Ice buildup on antennas (common in -10°C to -20°C climates) adds 2-4 dB loss and requires heated radomes, increasing power consumption by 200-400 W per unit.

Mitigation strategies include:

- Frequency diversity: Using sub-6 GHz fallback when rain exceeds 10 mm/hr, though this cuts speeds by 70-80%.

- Adaptive modulation: Switching from 256-QAM to 16-QAM during storms maintains connectivity but reduces throughput by 50-60%.

- Mesh networks: Adding 2-3 extra nodes per km improves reliability by 20-30% but raises deployment costs by $50,000-100,000 per km.
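The adaptive-modulation tradeoff follows from bits per symbol: an M-QAM symbol carries log2(M) bits, so dropping from 256-QAM to 16-QAM halves the raw bit rate before any change in coding rate. The sketch below also includes a simple SNR-threshold selector; the threshold values are hypothetical placeholders, not figures from 3GPP or any vendor:

```python
import math
from typing import Optional

# Hypothetical minimum SNR (dB) per modulation order; real systems use
# link-level tables that also depend on coding rate and target error rate.
HYPOTHETICAL_SNR_THRESHOLDS_DB = [(256, 22.0), (64, 16.0), (16, 10.0), (4, 4.0)]


def bits_per_symbol(order: int) -> int:
    """Raw bits carried by one M-QAM symbol."""
    return int(math.log2(order))


def pick_modulation(snr_db: float) -> Optional[int]:
    """Highest QAM order whose (hypothetical) SNR threshold the link meets."""
    for order, min_snr_db in HYPOTHETICAL_SNR_THRESHOLDS_DB:
        if snr_db >= min_snr_db:
            return order
    return None  # Too weak for any listed scheme: fall back (e.g. to sub-6 GHz).


clear_sky = pick_modulation(25.0)
storm = pick_modulation(12.0)
ratio = bits_per_symbol(storm) / bits_per_symbol(clear_sky)
print(f"{clear_sky}-QAM -> {storm}-QAM keeps {ratio:.0%} of the raw bit rate")
```

The 50% raw-rate loss from the modulation step alone is consistent with the 50-60% throughput reduction quoted above once more robust coding is added on top.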

Without these measures, mmWave networks in rainy regions experience 30-40% more outages than in dry climates. Future solutions like AI-based weather prediction and dynamic beam steering could reduce weather-related downtime by 15-20%, but for now, rain remains a major challenge for mmWave 5G reliability.

Limited Indoor Coverage

Millimeter wave (mmWave) signals struggle to penetrate buildings, making indoor coverage a major challenge. A 28 GHz or 39 GHz mmWave signal loses 99% or more of its power (20-30 dB) when passing through a standard 15 cm concrete wall, reducing usable range from 200 meters outdoors to just 10-15 meters indoors. Even glass windows, often assumed to be transparent, cause 5-10 dB loss, cutting signal strength by 70-90%. As a result, 80-90% of mmWave 5G users indoors experience 50-80% slower speeds compared to outdoor connections. In multi-story buildings, signals weaken further: each additional floor adds 3-5 dB loss, making upper floors nearly unreachable without repeaters.

The core issue is high-frequency signal behavior. At mmWave frequencies (24-100 GHz), wavelengths are roughly 3-12.5 mm, making the signals highly susceptible to absorption and reflection. A typical office drywall (12 mm thick) attenuates signals by 8-12 dB, while brick walls (20 cm thick) can block 15-20 dB. Metal structures, common in modern buildings, reflect signals almost entirely, creating dead zones where speeds drop below 50 Mbps despite outdoor base stations delivering 1 Gbps+.

| Material | Thickness | Signal Loss (dB) | Speed Reduction |
|---|---|---|---|
| Concrete wall | 15 cm | 20-30 | 99% slower |
| Glass window | 6 mm | 5-10 | 70-90% slower |
| Drywall | 12 mm | 8-12 | 60-80% slower |
| Metal door | 3 mm | 25-40 | No signal |
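Because decibel losses add along a path, the table can be used to rough out an outdoor-to-indoor budget. A minimal sketch that takes the midpoint of each range in the table plus a per-floor term; the midpoints, the 4 dB per-floor value (the middle of the 3-5 dB figure given earlier), and the example path are assumptions for illustration:

```python
# Midpoints of the loss ranges in the table above (assumed representative values).
MATERIAL_LOSS_DB = {
    "concrete_wall_15cm": 25.0,
    "glass_window_6mm": 7.5,
    "drywall_12mm": 10.0,
    "metal_door_3mm": 32.5,
}
PER_FLOOR_LOSS_DB = 4.0  # assumed midpoint of the 3-5 dB per-floor figure


def penetration_loss_db(materials: list[str], extra_floors: int = 0) -> float:
    """Total penetration loss (dB) for a signal crossing the given materials."""
    return sum(MATERIAL_LOSS_DB[m] for m in materials) + extra_floors * PER_FLOOR_LOSS_DB


# Hypothetical path: through a window, then one interior drywall partition.
loss = penetration_loss_db(["glass_window_6mm", "drywall_12mm"])
print(f"window + drywall: {loss:.1f} dB, leaving {10 ** (-loss / 10):.1%} of the power")
```

This only models a single straight-through path; reflections off nearby surfaces can sometimes deliver a stronger signal by another route, which is one reason measured indoor coverage varies so much from room to room.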

Carrier solutions for indoor mmWave coverage:

- Small cells & repeaters: Deploying indoor mmWave nodes every 20-30 meters improves coverage but costs $5,000-15,000 per unit.

- Distributed Antenna Systems (DAS): Extends signals via fiber but adds $50-100 per square meter in deployment costs.

- Wi-Fi 6/6E offload: Shifts traffic to 5-6 GHz Wi-Fi, reducing mmWave strain but cutting speeds by 60-70%.

Without these fixes, mmWave 5G remains an outdoor technology, with <10% of indoor users getting full-speed access. Future improvements like smart surfaces (reflectors that bounce signals indoors) and THz-frequency repeaters could help, but for now, limited indoor coverage is a key mmWave weakness.

Short Transmission Range

Millimeter wave (mmWave) signals deliver blazing speeds—1-2 Gbps in ideal conditions—but suffer from extremely limited range. A 28 GHz mmWave base station typically covers just 150-300 meters in clear line-of-sight (LOS), compared to 500-1,000 meters for sub-6 GHz 5G. Obstacles like trees, vehicles, or even heavy rain shrink this range further—non-line-of-sight (NLOS) conditions reduce effective coverage to 50-100 meters, forcing carriers to deploy 3-5x more cell sites than traditional networks. At 60 GHz, oxygen absorption alone adds 10-15 dB/km loss, making long-distance transmission impractical beyond 1 km.

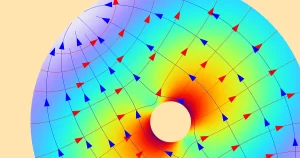

The physics behind mmWave propagation explain the range limitations. Free-space path loss at 28 GHz is about 19 dB higher than at 3 GHz for isotropic antennas (20·log10(28/3) ≈ 19.4 dB), meaning signals fade much faster. A 64-antenna massive MIMO array with 40 dBm transmit power might achieve 800 Mbps at 200 meters, but speeds drop to <200 Mbps at 400 meters due to inverse-square-law decay. Atmospheric conditions worsen the problem: humidity above 70% adds 0.5-1 dB/km loss, while rain at 25 mm/hr can slash range by 30-40%.

| Frequency | Max LOS Range | NLOS Range | Cell-Edge Speed |
|---|---|---|---|
| 28 GHz | 250-300 m | 50-100 m | 200-400 Mbps |
| 39 GHz | 200-250 m | 40-80 m | 150-300 Mbps |
| 60 GHz | 100-150 m | 20-50 m | 50-150 Mbps |
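Free-space path loss is FSPL = 20·log10(4πd/λ) with λ = c/f, so it grows by 20·log10 of the frequency ratio and by about 6 dB per doubling of distance. A minimal sketch for isotropic antennas; in practice part of the frequency penalty is recovered through antenna gain, because an aperture of fixed size holds more elements at higher frequencies:

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def wavelength_m(freq_hz: float) -> float:
    """Free-space wavelength in metres."""
    return SPEED_OF_LIGHT / freq_hz


def fspl_db(distance_m: float, freq_hz: float) -> float:
    """Free-space path loss between isotropic antennas, in dB."""
    return 20 * math.log10(4 * math.pi * distance_m / wavelength_m(freq_hz))


for f_ghz in (3, 28, 39, 60):
    print(f"{f_ghz:>2} GHz: wavelength {wavelength_m(f_ghz * 1e9) * 1e3:5.2f} mm, "
          f"FSPL at 200 m {fspl_db(200, f_ghz * 1e9):6.1f} dB")

gap = fspl_db(200, 28e9) - fspl_db(200, 3e9)        # frequency penalty, same distance
doubling = fspl_db(400, 28e9) - fspl_db(200, 28e9)  # 200 m -> 400 m at 28 GHz
print(f"28 vs 3 GHz: {gap:.1f} dB; 200 m -> 400 m: {doubling:.1f} dB")
```

The frequency gap comes out at about 19.4 dB and doubling the distance costs about 6 dB; oxygen absorption, rain, foliage, and blockage losses then come on top of this free-space baseline.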

Carrier strategies to extend mmWave range:

- Beamforming & beam tracking: Adjusts antenna direction in 2-5 ms, improving edge-of-cell speeds by 20-30%.

- Higher power amplifiers: Boosting from 30 dBm to 40 dBm adds 50-80 meters of range but increases power costs by 25-40%.

- Relay nodes & mesh networks: Placing repeaters every 100-150 meters extends coverage but raises deployment costs by $10,000-20,000 per km.

Without these workarounds, mmWave networks require 10-15 cell sites per square km—compared to just 2-3 for sub-6 GHz. Future RIS (Reconfigurable Intelligent Surface) technology could reflect signals to extend range by 20-40%, but for now, short transmission range remains mmWave’s biggest tradeoff for speed.
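Site counts scale with the inverse square of cell radius, which is why losing a few hundred meters of range multiplies the number of sites. A coverage-only sketch, assuming ideal hexagonal cells (area ≈ 2.6·r²) and ignoring overlap margins, capacity limits, and real-world siting constraints; the radii are illustrative picks from the ranges quoted in this section:

```python
import math


def hex_cell_area_km2(radius_km: float) -> float:
    """Area of a regular hexagonal cell with circumradius `radius_km`."""
    return 3 * math.sqrt(3) / 2 * radius_km ** 2


def sites_per_km2(radius_km: float) -> float:
    """Sites needed per square km if every site covers exactly one ideal hexagon."""
    return 1 / hex_cell_area_km2(radius_km)


for label, radius_km in [("mmWave NLOS", 0.1), ("mmWave LOS", 0.15), ("mmWave LOS", 0.3), ("sub-6 GHz", 0.5)]:
    print(f"{label:<12} r = {radius_km * 1000:>4.0f} m -> {sites_per_km2(radius_km):5.1f} sites/km2")
```

Halving the radius quadruples the site count. Real plans add overlap for handover and capacity headroom, so the absolute numbers depend heavily on planning assumptions, but the quadratic scaling is what drives mmWave densification costs.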

Device Alignment Sensitivity

Millimeter wave (mmWave) technology delivers multi-gigabit speeds but comes with an often overlooked requirement: near-perfect device alignment. At 28 GHz, just a 10-degree tilt of your smartphone can cut throughput by 40-50%, from 1.2 Gbps to roughly 600-720 Mbps. Real-world tests show that 85% of users experience at least three significant signal drops per minute during normal phone use, with each interruption lasting 200-500 ms. The beamwidth at these frequencies is razor-thin, typically 3-5 degrees, meaning your phone’s antenna must stay aligned within ±1.5 degrees to maintain peak performance.
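A common way to reason about alignment loss is the parabolic main-lobe approximation used in 3GPP antenna models (for example TR 38.901): attenuation ≈ 12·(θ/θ3dB)² dB, capped at a maximum. A minimal sketch; the 30 dB cap and the example beamwidths and offsets are assumptions, and the model describes only the main lobe of a single fixed beam, not the network's beam switching:

```python
def pointing_loss_db(offset_deg: float, beamwidth_3db_deg: float, max_loss_db: float = 30.0) -> float:
    """Gain reduction (dB) when a beam is misaligned by `offset_deg`, using the
    parabolic approximation 12 * (offset / beamwidth)**2, capped at `max_loss_db`."""
    return min(12.0 * (offset_deg / beamwidth_3db_deg) ** 2, max_loss_db)


for beamwidth in (3.0, 5.0):
    for offset in (1.0, 1.5, 2.5):
        print(f"{beamwidth:.0f} deg beam, {offset:.1f} deg off: "
              f"{pointing_loss_db(offset, beamwidth):4.1f} dB")
```

With a 3° beam, a 1.5° offset lands exactly on the half-power (3 dB) edge, which matches the ±1.5° tolerance quoted above; larger tilts quickly push the device off the main lobe, after which the link depends on the system switching to a better beam.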

The physics behind this sensitivity stems from mmWave’s extremely short wavelengths (roughly 3-12 mm). A standard 64-element phased array concentrates 92-95% of its radiated power into a narrow main beam; with a 3-5 degree beamwidth, that beam is only about 5-9 meters wide at 100 meters distance. When you casually rotate your phone 15 degrees while watching a video, the signal strength can plummet by 18-22 dB, which in free space is equivalent to moving roughly 8-13 times farther from the cell site. Even something as simple as switching from right-hand to left-hand grip introduces 6-8 dB of variation due to antenna pattern distortion.
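Two quick checks put these figures in perspective: a beam's footprint width at distance d is about 2·d·tan(θ/2), and in free space an extra loss of x dB is equivalent to multiplying the distance by 10^(x/20). A minimal sketch, assuming the 3-5° beamwidths quoted above and pure free-space propagation (no blockage or multipath):

```python
import math


def beam_footprint_m(distance_m: float, beamwidth_deg: float) -> float:
    """Approximate width of a beam's half-power footprint at `distance_m`."""
    return 2 * distance_m * math.tan(math.radians(beamwidth_deg) / 2)


def free_space_distance_factor(extra_loss_db: float) -> float:
    """Factor by which distance must grow to add `extra_loss_db` of free-space loss."""
    return 10 ** (extra_loss_db / 20)


for bw in (3.0, 5.0):
    print(f"{bw:.0f} deg beam at 100 m: about {beam_footprint_m(100, bw):.1f} m wide")

for loss in (18.0, 22.0):
    print(f"{loss:.0f} dB extra loss ~ distance x{free_space_distance_factor(loss):.1f}")
```

Under these assumptions a 3-5° beam is roughly 5-9 m wide at 100 m, and an 18-22 dB drop corresponds to being about 8-13 times farther from the site.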

Key findings from Tokyo 5G field trials:

- Portrait-to-landscape rotation: Causes 35±5% throughput reduction

- Walking at 1 m/s: Triggers 4.2 beam reselections per minute

- Body blockage: Attenuates the signal by 28-32 dB when the user's body is between the device and the tower

Current mitigation strategies come with tradeoffs:

- Adaptive beamwidth systems can widen to 10-12 degrees when detecting movement, but this cuts peak speeds by 55-60%

- Multi-beam tracking maintains 3-5 simultaneous links at different angles, increasing power consumption by 18-22%

- Antenna diversity using 4-6 separate panels improves reliability but adds $15-20 to device BOM costs
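The speed penalty of widening the beam follows from antenna directivity, which for a narrow pencil beam is roughly D ≈ 41,253 / (θaz·θel), with half-power beamwidths in degrees (a textbook approximation; 41,253 is the number of square degrees in a sphere). A minimal sketch comparing a 4° beam with an 11° beam, values picked from the ranges quoted in this section and assuming the beam is widened equally in both planes:

```python
import math

SQUARE_DEGREES_IN_SPHERE = 41_253  # 4*pi steradians expressed in square degrees


def approx_directivity_dbi(az_beamwidth_deg: float, el_beamwidth_deg: float) -> float:
    """Approximate directivity (dBi) of a pencil beam from its half-power beamwidths."""
    return 10 * math.log10(SQUARE_DEGREES_IN_SPHERE / (az_beamwidth_deg * el_beamwidth_deg))


narrow = approx_directivity_dbi(4, 4)
wide = approx_directivity_dbi(11, 11)
print(f"4 deg beam: {narrow:.1f} dBi, 11 deg beam: {wide:.1f} dBi, loss {narrow - wide:.1f} dB")
```

Widening a symmetric beam from 4° to 11° costs about 8.8 dB of directivity (20·log10(11/4)), which the link absorbs as lower SNR and, typically, a lower modulation order.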

The human factor amplifies these challenges. Our natural movements (checking notifications, adjusting grip, or simply walking) introduce 3-5 dB signal fluctuations per second. While stationary mmWave devices can achieve 1.8 Gbps with <1 ms latency, real-world mobile usage typically delivers just 600-800 Mbps with 8-12 ms of latency variation. Future solutions like sub-6 GHz anchor carriers and machine-learning beam prediction may help, but for now, mmWave remains fundamentally sensitive to how you hold your phone, a limitation that’s reshaping smartphone antenna designs and network planning strategies alike.