Microwave signals (1–100 GHz) offer high bandwidth (up to 10 Gbps) but require line-of-sight transmission, while lower-frequency radio waves (3 kHz–300 MHz) penetrate obstacles better at lower data rates (1–100 Mbps). Microwave links use parabolic antennas for focused beams (1°–5° wide), whereas radio services typically employ omnidirectional antennas. Atmospheric absorption (e.g., the oxygen absorption peak at 60 GHz) affects microwaves more than lower-frequency radio signals.

Frequency Range Differences

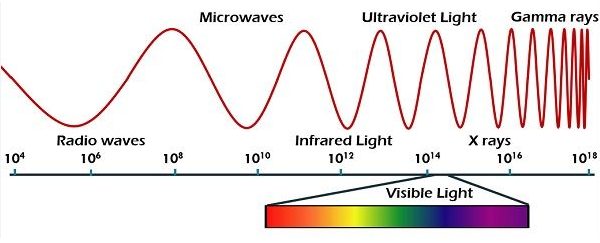

Microwave and radio wave signals are both part of the electromagnetic spectrum, but they operate in different frequency ranges, which directly affects their performance and applications. Radio waves in the broad sense span 3 kHz to 300 GHz, but the most commonly used communication frequencies (AM/FM radio, Wi-Fi, and mobile networks) fall between 30 kHz and 6 GHz. Microwaves are the upper part of that spectrum, usually defined as 1 GHz to 300 GHz, with practical applications (radar, satellite links, and microwave ovens) concentrated between 2.45 GHz and 60 GHz. Because the two ranges overlap, the comparisons in this article use “radio” for the lower bands and “microwave” for the higher ones.

“The higher the frequency, the more data you can transmit—but also the shorter the range and higher the cost. That’s why 5G networks use millimeter waves (24 GHz and above) for speed, but still rely on sub-6 GHz for wider coverage.”

One key difference is signal penetration. Lower-frequency radio waves (below 1 GHz) can travel farther and pass through walls more easily, making them ideal for broadcast radio (88–108 MHz FM) and cellular networks (700 MHz–2.1 GHz 4G LTE). Microwaves, however, struggle with obstacles—a 5 GHz Wi-Fi signal loses 70% more power through a concrete wall than a 2.4 GHz signal. This is why microwave links (like those in 60 GHz backhaul systems) require clear line-of-sight and often use directional antennas to maintain signal integrity.

Another factor is bandwidth capacity. Since microwaves operate at higher frequencies, they support wider channels (up to 400 MHz in 5G mmWave vs. 20 MHz in 4G LTE), enabling faster data rates. For example, a 28 GHz microwave link can deliver 1 Gbps over 1 km, while a 900 MHz radio link maxes out at 100 Mbps under the same conditions. However, this comes at a cost: atmospheric absorption (like oxygen absorption at 60 GHz) can attenuate a microwave signal by 15–20 dB/km, which shortens usable range and forces engineers to use repeaters or higher-power transmitters.
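The link between channel width and data rate can be made concrete with the Shannon-Hartley bound, C = B·log2(1 + SNR). The sketch below is illustrative only: the channel widths come from the paragraph above, while the 20 dB signal-to-noise ratio and the function name are assumptions chosen for the example, and real links fall short of this theoretical ceiling.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Theoretical upper bound on error-free data rate (Shannon-Hartley)."""
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a plain power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)


# Channel widths from the text; the SNR is an assumed value for illustration.
ASSUMED_SNR_DB = 20.0
lte_capacity = shannon_capacity_bps(20e6, ASSUMED_SNR_DB)      # 20 MHz 4G LTE channel
mmwave_capacity = shannon_capacity_bps(400e6, ASSUMED_SNR_DB)  # 400 MHz 5G mmWave channel

print(f"20 MHz channel:  {lte_capacity / 1e6:.0f} Mbps")
print(f"400 MHz channel: {mmwave_capacity / 1e6:.0f} Mbps")
```

At equal SNR the bound scales linearly with bandwidth, so the 400 MHz channel has 20 times the ceiling of the 20 MHz one (about 2.66 Gbps versus about 133 Mbps under the assumed 20 dB).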

Signal Strength Comparison

When comparing microwave and radio wave signals, signal strength is a critical factor that determines real-world performance. Radio waves (below 6 GHz) generally travel farther and penetrate obstacles better, while microwaves (above 6 GHz) deliver higher data rates but suffer from faster signal decay. For example, a 100-watt FM radio station (88–108 MHz) can cover a 50-mile radius, whereas a 60 GHz microwave link loses 98% of its power over just 1 km due to oxygen absorption.
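Decibel figures and percentages describe the same loss on different scales, and converting between them helps sanity-check claims like the one above. This is a minimal sketch with hypothetical helper names; the 15–20 dB/km inputs are the oxygen-absorption range quoted in this article.

```python
import math


def fraction_lost(loss_db: float) -> float:
    """Fraction of the original power that is lost after `loss_db` of attenuation."""
    return 1 - 10 ** (-loss_db / 10)


def loss_db_from_fraction_lost(fraction: float) -> float:
    """Inverse conversion: attenuation in dB for a given fraction of power lost."""
    return -10 * math.log10(1 - fraction)


for db in (15, 17, 20):
    print(f"{db} dB over 1 km -> {fraction_lost(db) * 100:.1f}% of power lost")
```

A 98% loss corresponds to about 17 dB, which sits inside the 15–20 dB/km range.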

“Lower frequencies mean longer wavelengths, which diffract around obstacles—that’s why AM radio (535–1605 kHz) can bend over hills, while 5G mmWave (24–40 GHz) gets blocked by a tree.”
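The wavelengths behind this behavior follow from λ = c / f. The short sketch below computes them for a few of the frequencies mentioned in this article; the function name is an assumption made for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength in meters for a given frequency in hertz."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


examples = {
    "AM radio (1 MHz)": 1e6,
    "FM radio (100 MHz)": 100e6,
    "Wi-Fi (2.4 GHz)": 2.4e9,
    "Wi-Fi (5 GHz)": 5e9,
    "5G mmWave (28 GHz)": 28e9,
}
for label, freq in examples.items():
    print(f"{label}: {wavelength_m(freq):.4f} m")
```

An AM wave is hundreds of meters long and a 28 GHz wave is about a centimeter, which is why terrain that the former bends around can block the latter.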

Key Factors Affecting Signal Strength

- Free-Space Path Loss (FSPL)

  - Radio waves (e.g., 900 MHz) experience ~112 dB of free-space loss over 10 km.

  - Microwaves (e.g., 28 GHz) lose ~141 dB over the same distance, about 30 dB more.

  - This is why sub-6 GHz 5G can cover 1–3 km per tower, while mmWave 5G needs a small cell every 200–500 meters.

- Atmospheric Absorption

  - Water vapor and oxygen affect microwaves far more than lower-frequency radio:

    - At 24 GHz, water vapor causes 0.2 dB/km loss at 50% humidity.

    - At 60 GHz, oxygen molecules absorb 15 dB/km, making the band useless for long-range comms but secure for short-range military use.

- Obstacle Penetration

  - A 2.4 GHz Wi-Fi signal (12.5 cm wavelength) loses ~6 dB through drywall, while a 5 GHz signal (6 cm) drops ~10 dB.

  - Microwaves (e.g., 10 GHz radar) reflect off buildings, requiring precise alignment: with a narrow beam, a 1° misalignment can cut the signal by 3 dB.
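Free-space path loss can be computed directly from the standard formula FSPL(dB) = 20·log10(d_km) + 20·log10(f_MHz) + 32.44. The sketch below applies it to the two example frequencies in the list and then adds the 15 dB/km oxygen absorption figure for 60 GHz. Function and variable names are assumptions made for illustration, and the model ignores antenna gains, terrain, and rain.

```python
import math


def fspl_db(distance_km: float, freq_mhz: float) -> float:
    """Free-space path loss in dB between isotropic antennas."""
    return 20 * math.log10(distance_km) + 20 * math.log10(freq_mhz) + 32.44


def total_loss_db(distance_km: float, freq_mhz: float, absorption_db_per_km: float) -> float:
    """FSPL plus a distance-proportional gaseous absorption term."""
    return fspl_db(distance_km, freq_mhz) + absorption_db_per_km * distance_km


loss_900mhz = fspl_db(10, 900)       # 900 MHz over 10 km
loss_28ghz = fspl_db(10, 28_000)     # 28 GHz over 10 km
loss_60ghz_1km = total_loss_db(1, 60_000, absorption_db_per_km=15)

print(f"900 MHz, 10 km: {loss_900mhz:.1f} dB")
print(f"28 GHz, 10 km:  {loss_28ghz:.1f} dB")
print(f"60 GHz, 1 km (with oxygen absorption): {loss_60ghz_1km:.1f} dB")
```

The gap between any two frequencies at the same distance is 20·log10(f2/f1), about 30 dB between 900 MHz and 28 GHz. Absorption adds a per-kilometer term that free-space spreading does not have.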

Practical Impact on Deployments

| Parameter | Radio Waves (1 GHz) | Microwaves (30 GHz) |
|---|---|---|
| Range (urban) | 5–20 km | 0.2–2 km |
| Wall Penetration | 30% power retained | <5% power retained |
| Rain Attenuation | 0.01 dB/km | 5 dB/km (heavy rain) |
| Cost per km | $500 (cellular) | $15,000 (microwave link) |
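The rows of this table can be combined into a rough link budget: received power equals transmit power plus antenna gains minus every loss along the path. The sketch below uses the table's rain and wall figures over a 2 km path. The 30 dBm transmit power, the 0 dBi antenna gains, and all function names are assumptions chosen only to make the comparison concrete.

```python
import math


def fspl_db(distance_km: float, freq_mhz: float) -> float:
    """Free-space path loss in dB between isotropic antennas."""
    return 20 * math.log10(distance_km) + 20 * math.log10(freq_mhz) + 32.44


def retained_to_loss_db(fraction_retained: float) -> float:
    """Convert a 'fraction of power retained' figure into a loss in dB."""
    return -10 * math.log10(fraction_retained)


def received_power_dbm(tx_dbm, gains_dbi, distance_km, freq_mhz,
                       rain_db_per_km, wall_fraction_retained):
    """Simple link budget: transmit power plus gains minus path, rain, and wall losses."""
    losses = (fspl_db(distance_km, freq_mhz)
              + rain_db_per_km * distance_km
              + retained_to_loss_db(wall_fraction_retained))
    return tx_dbm + gains_dbi - losses


# Assumed for illustration: 30 dBm transmitter, 0 dBi total antenna gain, 2 km path.
rx_1ghz = received_power_dbm(30, 0, 2, 1_000, rain_db_per_km=0.01, wall_fraction_retained=0.30)
rx_30ghz = received_power_dbm(30, 0, 2, 30_000, rain_db_per_km=5.0, wall_fraction_retained=0.05)

print(f"1 GHz link:  {rx_1ghz:.1f} dBm")
print(f"30 GHz link: {rx_30ghz:.1f} dBm")
```

Under these assumptions the 30 GHz link arrives roughly 47 dB weaker, which is the gap that high-gain directional antennas and shorter hops have to close in practice.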

Radio waves dominate in coverage-critical apps:

- AM/FM broadcasting uses 50–100 kW transmitters to cover entire cities.

- 4G LTE (700 MHz–2.1 GHz) provides 90% indoor penetration, crucial for smartphones.

Microwaves excel where speed matters:

- Satellite comms (12–18 GHz) achieve 100 Mbps–1 Gbps but require 1.2-meter dishes to compensate for path loss.

- Data center interconnects (80 GHz) push 400 Gbps over 1 km, but need fog-free weather (fog adds 3 dB/km loss).
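Dish size matters because the gain of a parabolic antenna grows with the square of its diameter measured in wavelengths: G = η·(πD/λ)². The sketch below estimates gain and beamwidth for a 1.2-meter dish at 12 GHz. The 60% aperture efficiency and the 70·λ/D beamwidth rule of thumb are assumptions commonly used for rough estimates, not values taken from this article.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458


def dish_gain_dbi(diameter_m: float, freq_hz: float, efficiency: float = 0.6) -> float:
    """Approximate gain of a parabolic dish; efficiency is an assumed typical value."""
    wavelength = SPEED_OF_LIGHT_M_PER_S / freq_hz
    gain_linear = efficiency * (math.pi * diameter_m / wavelength) ** 2
    return 10 * math.log10(gain_linear)


def dish_beamwidth_deg(diameter_m: float, freq_hz: float) -> float:
    """Rule-of-thumb half-power beamwidth in degrees (about 70 * wavelength / diameter)."""
    wavelength = SPEED_OF_LIGHT_M_PER_S / freq_hz
    return 70 * wavelength / diameter_m


gain = dish_gain_dbi(1.2, 12e9)
beamwidth = dish_beamwidth_deg(1.2, 12e9)
print(f"1.2 m dish at 12 GHz: {gain:.1f} dBi gain, {beamwidth:.2f} degree beamwidth")
```

A gain of roughly 41 dBi comes with a beam only about 1.5° wide, which is why such dishes need careful pointing.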

Usage and Applications

Microwave and radio wave technologies serve fundamentally different purposes in modern communication systems, driven by their distinct physical properties. Radio waves (3 kHz–6 GHz) dominate applications requiring wide-area coverage and obstacle penetration, while microwaves (6 GHz–300 GHz) excel in high-capacity, short-range links where speed and precision matter. For instance, 95% of global FM radio broadcasting operates between 88–108 MHz, delivering audio to cars and homes with 50–100 kW transmitters covering 50–100 km radii. Meanwhile, 60% of modern 5G millimeter-wave deployments use 24–40 GHz bands to achieve 1–3 Gbps speeds, though their 200–500 meter cell range limits them to dense urban hotspots.

The telecom industry spends $180 billion annually on sub-6 GHz infrastructure for 4G/5G networks, compared to $12 billion for millimeter-wave equipment, a 15:1 ratio reflecting radio waves’ cost advantage in coverage scenarios. However, microwaves claim critical niches: 14/28 GHz satellite links carry traffic to places that submarine and terrestrial fiber do not reach, with high-throughput geostationary satellites handling 500 Gbps+ of capacity from an orbit roughly 36,000 km up. Back on Earth, 38 GHz microwave backhaul connects 60% of urban cell towers, moving 10–40 Gbps per link at $0.02 per gigabyte, which is cheaper than fiber in rough terrain.

| Application | Frequency | Key Metric | Radio Wave | Microwave |
|---|---|---|---|---|
| Broadcast Radio | 88–108 MHz | Coverage radius | 100 km (100 kW transmitter) | N/A |
| 4G LTE | 700–2100 MHz | Indoor penetration | 90% signal retention | 15% at 3.5 GHz |
| Wi-Fi 6 | 2.4/5 GHz | Peak speed per device | 300 Mbps (2.4 GHz) | 1.2 Gbps (5 GHz) |
| Satellite TV | 12–18 GHz | Dish size requirement | N/A | 60 cm (Ku-band) |
| Radar Speed Guns | 10.525 GHz | Velocity measurement accuracy | N/A | ±1 km/h at 300 m range |
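The radar speed gun row relies on the Doppler effect: a target moving directly toward the radar shifts the reflected frequency by f_d = 2·v·f0 / c. The sketch below shows the size of that shift at 10.525 GHz. The function names are hypothetical, and the calculation assumes the target moves straight along the beam, with no angle correction.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
RADAR_FREQ_HZ = 10.525e9  # X-band speed gun frequency from the table


def doppler_shift_hz(speed_kmh: float, radar_freq_hz: float = RADAR_FREQ_HZ) -> float:
    """Two-way Doppler shift for a target moving directly along the beam."""
    speed_m_per_s = speed_kmh / 3.6
    return 2 * speed_m_per_s * radar_freq_hz / SPEED_OF_LIGHT_M_PER_S


def speed_kmh_from_shift(shift_hz: float, radar_freq_hz: float = RADAR_FREQ_HZ) -> float:
    """Invert the Doppler relation to recover target speed in km/h."""
    return shift_hz * SPEED_OF_LIGHT_M_PER_S / (2 * radar_freq_hz) * 3.6


shift_100 = doppler_shift_hz(100)
shift_per_kmh = doppler_shift_hz(1)
print(f"100 km/h -> {shift_100:.0f} Hz shift")
print(f"1 km/h   -> {shift_per_kmh:.1f} Hz shift")
```

Each km/h of target speed corresponds to roughly 19.5 Hz of shift at this frequency, so resolving ±1 km/h means resolving a frequency difference of about that size.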

In industrial settings, 24 GHz radar sensors monitor 90% of liquid tank levels with ±0.5 mm precision, while 433 MHz RFID tags track warehouse inventory through metal shelves with 6-meter read ranges. The medical field shows similar divergence: MRI machines use 64–128 MHz radio waves for whole-body imaging, whereas 60 GHz body scanners at airports detect concealed objects with 2 mm resolution but only work at 1.5 meter distances.

Consumer devices reveal the most visible tradeoffs. A 900 MHz LoRaWAN IoT device can transmit 10 km at about 0.1 W of transmit power, while a 60 GHz WiGig laptop dock delivers 7 Gbps but fails if you walk behind a curtain. This explains why 78% of IoT deployments choose sub-GHz radios, while wireless WiGig docks rely exclusively on millimeter waves. Even weather plays a role: heavy rain attenuates 80 GHz links by 15 dB/km, forcing backup radios to take over, a non-issue for 600 MHz NB-IoT networks that work through storms.

The military exploits both extremes: HF radios (3–30 MHz) bounce off the ionosphere for 10,000 km naval comms, while 94 GHz missile seekers spot tank engines through smoke with 0.1° angular accuracy. Civil aviation uses 118–137 MHz for voice comms and relies on 1030/1090 MHz transponders to avoid collisions, a long-range job poorly suited to millimeter-wave frequencies, where atmospheric absorption and rain fade are far higher.