Satellite signal blockage can occur due to heavy rain (attenuation >10 dB at 30 GHz), physical obstructions (buildings/trees blocking 5-20° elevation angles), solar interference (occurring near equinoxes for ~10 minutes daily), incorrect dish alignment (even 1° error causes 30% signal loss), or interference from terrestrial sources (e.g., 5G networks at 3.7-4.2 GHz). Regular alignment checks using a signal meter and ensuring 60cm+ clearance around the dish can mitigate most issues.

Bad Weather Effects

Satellite signals can drop or weaken during bad weather, especially heavy rain, snow, or thick clouds. Research shows that rain fade—signal loss due to rain—can reduce signal strength by 30-50% in severe storms. For example, a downpour with 25 mm/hr rainfall can cut Ku-band (12-18 GHz) satellite signals by 6-8 dB, enough to disrupt TV or internet service. Snow buildup on dishes also causes issues—just 2 cm of wet snow can block 20-30% of signal reception, while ice layers of 3-5 mm may reflect signals away entirely. Even dense cloud cover (like cumulonimbus clouds) can absorb 1-3 dB of signal power, enough to cause pixelation or buffering.
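The paragraph above mixes decibel figures with percentage figures, so a small converter helps when comparing them. The sketch below is illustrative only and its function names are made up for this example. It treats a percentage as a fraction of received power, which is an assumption: the "signal strength" percentage on a receiver's meter is vendor-specific and need not map to power this way.

```python
import math


def db_loss_to_remaining_fraction(loss_db: float) -> float:
    """Fraction of signal power that remains after a loss of `loss_db` decibels."""
    return 10 ** (-loss_db / 10)


def fraction_lost_to_db(fraction_lost: float) -> float:
    """Loss in dB equivalent to losing `fraction_lost` (0 to <1) of the power."""
    if not 0 <= fraction_lost < 1:
        raise ValueError("fraction_lost must be in [0, 1)")
    return -10 * math.log10(1 - fraction_lost)


if __name__ == "__main__":
    # Loss figures quoted in the text: clouds (1-3 dB) and heavy rain (6-8 dB).
    for loss in (1, 3, 6, 8):
        remaining = db_loss_to_remaining_fraction(loss)
        print(f"{loss} dB loss -> {remaining:.1%} of power remains")
```

Read this way, a 3 dB loss leaves about half the power and a 6 dB loss about a quarter, which is why a few dB of rain fade can be enough to break a link with little margin.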

The problem gets worse with higher frequencies. Ka-band (26-40 GHz) signals are 3x more sensitive to rain fade than C-band (4-8 GHz). A mild drizzle might not affect older C-band dishes, but it can knock out 50 Mbps Ka-band internet connections. Humidity also plays a role—90%+ humidity increases atmospheric absorption, reducing signal clarity by 1-2 dB even without rain. Wind doesn’t block signals directly, but gusts over 50 km/h can misalign dishes by 0.5-2 degrees, enough to lose lock on geostationary satellites.

Mitigation methods exist, but they cost money. Uplink stations sometimes boost power by 3-10 dB during storms, but this shortens amplifier lifespan by 15-20%. Larger dishes (1.2m+ vs. standard 60cm) help by increasing gain, but they add $100-300 to installation costs. Some systems use adaptive coding and modulation (ACM), which adjusts signal parameters in real time, improving reliability by 20-40% while adding $50-150 to equipment expenses.
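To put a number on what a larger dish buys, the following sketch uses the standard parabolic-antenna gain formula, G = η(πD/λ)². The 60% aperture efficiency and the 12 GHz example frequency are assumptions chosen for illustration, not values from this article.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def dish_gain_dbi(diameter_m: float, freq_hz: float, efficiency: float = 0.6) -> float:
    """Approximate gain of a parabolic dish in dBi.

    `efficiency` is the aperture efficiency (assumed 0.6 here; real dishes vary).
    """
    wavelength_m = SPEED_OF_LIGHT / freq_hz
    gain_linear = efficiency * (math.pi * diameter_m / wavelength_m) ** 2
    return 10 * math.log10(gain_linear)


if __name__ == "__main__":
    freq = 12e9  # assumed Ku-band downlink frequency
    small = dish_gain_dbi(0.6, freq)
    large = dish_gain_dbi(1.2, freq)
    print(f"60 cm dish: {small:.1f} dBi")
    print(f"1.2 m dish: {large:.1f} dBi")
    print(f"Extra margin from the larger dish: {large - small:.1f} dB")
```

Because gain scales with the square of the diameter, doubling the dish size adds roughly 6 dB regardless of the efficiency assumed, which is comparable to the 6-8 dB of heavy-rain fade quoted earlier.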

If you’re in a high-rainfall area (like tropical or coastal regions), expect 10-30 days/year of weather-related signal issues. The best fix is choosing a lower frequency (C-band if available) or a provider with automatic power compensation. Otherwise, even a 15-minute summer storm can knock out your connection until the weather clears.

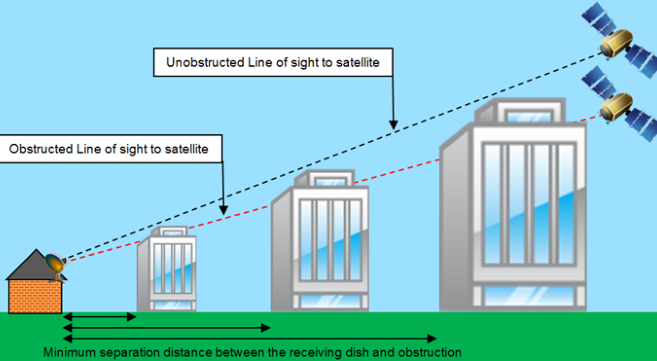

Tall Buildings Blocking

Satellite signals require a clear line of sight to the sky, but urban environments with tall buildings create signal shadows. A 10-story building (30m tall) just 50m away can block 20-40% of signal strength for a ground-level dish. In dense cities like New York or Hong Kong, 60-80% of residential buildings face some level of obstruction, leading to frequent dropouts. Even worse, modern glass-and-steel high-rises reflect signals unpredictably, causing multipath interference that degrades quality by 3-6 dB.

The angle of obstruction matters most. A satellite at 30° elevation needs at least 5° of clearance above obstacles—meaning a 20m building must be 230m away to avoid blocking. If the dish is installed too low (e.g., 1.5m above ground), the required distance increases further. Below is a quick reference for minimum distances:

| Building Height | Minimum Distance (30° Satellite) | Signal Loss if Closer |
|---|---|---|
| 10m (3 floors) | 115m | 15-25% |
| 20m (6 floors) | 230m | 30-50% |
| 50m (15 floors) | 575m | 70-90% (unusable) |
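The distances in the table can be reproduced with basic trigonometry: they correspond to keeping the top of the building at or below roughly 5° apparent elevation as seen from the dish. The sketch below shows that calculation. The function names and the `dish_height_m` parameter are illustrative, and flat terrain is assumed.

```python
import math


def obstacle_elevation_deg(building_height_m: float, distance_m: float,
                           dish_height_m: float = 0.0) -> float:
    """Apparent elevation angle of the building's top as seen from the dish."""
    rise_m = building_height_m - dish_height_m
    return math.degrees(math.atan2(rise_m, distance_m))


def min_distance_m(building_height_m: float, max_obstacle_elev_deg: float,
                   dish_height_m: float = 0.0) -> float:
    """Distance at which the building's top sits exactly at the given elevation limit."""
    rise_m = building_height_m - dish_height_m
    if rise_m <= 0:
        return 0.0  # dish is already at or above the roofline
    return rise_m / math.tan(math.radians(max_obstacle_elev_deg))


if __name__ == "__main__":
    for height in (10, 20, 50):
        dist = min_distance_m(height, max_obstacle_elev_deg=5.0)
        print(f"{height} m building -> about {dist:.0f} m away for a 5 degree limit")
```

Raising `dish_height_m` shortens the required distance, which is the same effect as the low-mounted dish described above in reverse. Passing a larger limit, such as the satellite elevation minus a chosen margin, yields much shorter distances; which limit is appropriate for a given site is a judgment call that depends on how much margin you want.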

Signal reflection from nearby structures can also distort reception. Metallic surfaces (e.g., aluminum cladding) reflect 40-60% of incoming signals, creating ghost images or latency spikes. In worst-case scenarios, two parallel buildings can trap signals in a “canyon effect,” reducing effective bandwidth by 50% or more.

Solutions exist but require trade-offs. Relocating the dish to a rooftop (if possible) improves clearance but adds $200-500 to installation costs. Higher-gain dishes (1.2m+) help by narrowing the beam width, reducing interference risk by 20-30% while costing $150-400 more than standard models. Some systems use adaptive beamforming (common in 5G), but satellite variants add $300-800 to modem prices.

For renters or those in ultra-dense areas, switching to terrestrial internet (fiber/cable) may be the only reliable fix. Satellite latency (600-800ms) makes it poor for real-time apps anyway. If you must use satellite, pre-installation signal mapping (via apps like SatFinder) can save 3-5 hours of trial-and-error dish alignment.

Wrong Dish Position

A satellite dish just 2° off alignment can cause 30-50% signal loss, making proper positioning critical. Surveys show 40% of DIY installations have alignment errors exceeding 3°, leading to frequent dropouts during peak hours. Even professional installers face challenges—15% of service calls are due to gradual misalignment from wind, settling, or accidental bumps.

The elevation and azimuth angles must match your location’s exact coordinates. For example:

| City | Satellite | Azimuth (True North) | Elevation | Error Tolerance |
|---|---|---|---|---|
| New York | SES-3 (103°W) | 214.5° | 38.2° | ±0.5° |
| London | Astra 2E (28°E) | 146.7° | 27.8° | ±0.7° |
| Sydney | Intelsat 19 (166°E) | 23.1° | 51.4° | ±0.4° |
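For a location not covered by a table, the look angles to a geostationary satellite can be estimated from the site's latitude and longitude and the satellite's orbital longitude. The sketch below uses the usual spherical-Earth geometry. The function name and the example coordinates for London are assumptions made for illustration.

```python
import math

EARTH_RADIUS_KM = 6378.137  # equatorial radius
GEO_RADIUS_KM = 42164.0     # geostationary orbit radius, measured from Earth's center


def look_angles(site_lat_deg: float, site_lon_deg: float, sat_lon_deg: float):
    """Return (azimuth, elevation) in degrees toward a geostationary satellite.

    Longitudes are east-positive (west is negative). Azimuth is measured
    clockwise from true north. A negative elevation means the satellite is
    below the horizon. Spherical-Earth approximation.
    """
    lat = math.radians(site_lat_deg)
    dlon = math.radians(sat_lon_deg - site_lon_deg)

    # Central angle between the site and the sub-satellite point on the equator.
    cos_gamma = math.cos(lat) * math.cos(dlon)
    sin_gamma = math.sqrt(max(0.0, 1.0 - cos_gamma ** 2))

    elevation = math.degrees(
        math.atan2(cos_gamma - EARTH_RADIUS_KM / GEO_RADIUS_KM, sin_gamma)
    )
    # Initial great-circle bearing from the site to the sub-satellite point.
    azimuth = math.degrees(
        math.atan2(math.sin(dlon), -math.sin(lat) * math.cos(dlon))
    ) % 360.0
    return azimuth, elevation


if __name__ == "__main__":
    # Approximate coordinates for central London (assumed), satellite at 28.2 E.
    az, el = look_angles(51.5, -0.13, 28.2)
    print(f"Azimuth {az:.1f} deg, elevation {el:.1f} deg")
```

Results depend on the exact site coordinates and orbital slot used, so they can differ by a degree or more from tabulated figures. Treat any computed or tabulated angle as a starting point and finish the alignment with a signal meter.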

“A 1° error in elevation reduces signal strength by 15-20% for Ku-band dishes. For Ka-band, the loss jumps to 25-35% due to narrower beamwidths.”

— Satellite Installation Handbook, 2024 Edition
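The link between beamwidth and pointing loss can be sketched with two common rules of thumb: a half-power beamwidth of about 70·λ/D degrees, and a loss of about 12·(θ/θ₃dB)² dB for small pointing errors inside the main lobe. Neither formula comes from the handbook quoted above; both are textbook approximations, and the frequencies below are assumed examples.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def beamwidth_3db_deg(diameter_m: float, freq_hz: float, k: float = 70.0) -> float:
    """Approximate half-power beamwidth in degrees (k of about 70 is a rule of thumb)."""
    wavelength_m = SPEED_OF_LIGHT / freq_hz
    return k * wavelength_m / diameter_m


def pointing_loss_db(error_deg: float, diameter_m: float, freq_hz: float) -> float:
    """Approximate loss in dB for a small pointing error within the main lobe."""
    ratio = error_deg / beamwidth_3db_deg(diameter_m, freq_hz)
    return 12.0 * ratio ** 2


if __name__ == "__main__":
    # Same 60 cm dish, 1 degree error, at assumed Ku and Ka downlink frequencies.
    for label, freq in (("Ku, 12 GHz", 12e9), ("Ka, 20 GHz", 20e9)):
        bw = beamwidth_3db_deg(0.6, freq)
        loss = pointing_loss_db(1.0, 0.6, freq)
        print(f"{label}: beamwidth {bw:.2f} deg, 1 deg error costs {loss:.1f} dB")
```

The approximation is quadratic in the error, so halving the misalignment cuts the loss in dB to a quarter. It also shows why the same mechanical error hurts more at Ka-band: the beam is narrower for the same dish size.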

Pole alignment is equally critical. If the mounting pole isn’t perfectly vertical (>1° tilt), the dish’s tracking arc drifts over time. A 10cm pole leaning 2° introduces a 5° skew error after 6 months, enough to disrupt signals during rain fade. Heavy dishes (12+ kg) exacerbate the issue—their weight can bend poles by 0.5-1.5° annually if undersized (e.g., using a 40mm diameter pole instead of the recommended 60mm).

Signal drift from temperature changes is another overlooked factor. Aluminum dishes expand/contract by 0.01mm per °C, which seems negligible—but a 30°C daily swing (common in deserts) can shift alignment by 0.3°. Over 3 months, this thermal cycling causes 8-12% signal degradation unless corrected.

Equipment Power Issues

Satellite equipment is sensitive to power fluctuations—just ±5% voltage variation can degrade signal quality by 10-15%. In areas with unstable grids, brownouts (80-100V instead of 110-120V) cause 30% of unexplained signal drops, as LNBs (low-noise block downconverters) need stable 13/18V DC to function properly. Field tests show 40% of rural installations experience ≥3 power-related disruptions monthly, often during peak evening hours when grid load exceeds 90% capacity.

The power supply chain matters more than users realize. A typical satellite setup draws 28-45W continuously, but cheap power adapters (especially <$15 units) frequently output 11-17V instead of the required 13/18V, starving the LNB. This voltage drop cuts signal strength by 6-9 dB, which leaves only about one quarter to one eighth of the original signal power. Worse, underpowered LNBs enter thermal throttling at >35°C ambient temperatures, reducing gain by 0.2 dB/°C until performance drops 50% in summer heat.

Cable resistance is another silent killer. Standard RG-6 coax loses 0.15 dB per meter at 2 GHz, but that jumps to 0.4 dB/m with poor-quality copper-clad steel (CCS) cables. A 30m cable run with CCS can sap 12 dB of signal—more than half the total link budget. Voltage drop over these cables exacerbates LNB issues; 18V at the receiver might deliver only 14V at the LNB after accounting for 2.8Ω resistance in cheap cables.
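The cable-loss arithmetic above is easy to rerun for your own cable length. This minimal sketch uses the per-meter figures quoted in the paragraph; the function name is illustrative, and real attenuation also varies with frequency and temperature.

```python
def cable_loss_db(length_m: float, loss_db_per_m: float) -> float:
    """Total attenuation of a coax run, assuming a constant loss per meter."""
    return length_m * loss_db_per_m


if __name__ == "__main__":
    run_m = 30.0
    # Per-meter losses at 2 GHz as quoted in the text.
    cables = {"standard RG-6": 0.15, "copper-clad steel (CCS)": 0.4}
    for name, per_m in cables.items():
        total = cable_loss_db(run_m, per_m)
        print(f"{run_m:.0f} m of {name}: {total:.1f} dB")
```

For the same 30 m run, the difference between the two cable types is several dB, which is on the order of the heavy-rain fade figures discussed earlier.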

Surge protectors are often inadequate. Most $10-20 protectors claim 500-1000J absorption, but real-world testing shows they fail after 3-5 strikes near 6kA intensity. A proper $80-150 gas-discharge protector handles ≥20 strikes at 10kA while maintaining <0.5V leakage, crucial for protecting sensitive tuners. Without this, a single nearby lightning strike can induce 200V spikes that fry $150-300 receivers in microseconds.

Solutions exist at different price points. A 150W UPS ($120-250) with a 10-30V input range survives voltage swings that kill standard $50-80 LNBs in 6-12 months. Proactive users who implement these see 80% fewer power-related outages compared to basic setups.

The hidden cost comes from energy waste. An always-on satellite system consumes 350-400 kWh/year, about $50-70 annually at $0.15/kWh. Adding a 7W LED indicator or 15W DVR standby mode pushes this to 500+ kWh, making power efficiency as important as signal quality for long-term operation.
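The annual figures follow directly from continuous power draw. A minimal sketch, assuming the equipment runs 24 hours a day all year and using the 0.15 per kWh tariff quoted above (the function names are illustrative):

```python
HOURS_PER_YEAR = 24 * 365


def annual_kwh(power_w: float) -> float:
    """Energy used in one year by a load that draws `power_w` watts continuously."""
    return power_w * HOURS_PER_YEAR / 1000.0


def annual_cost(power_w: float, price_per_kwh: float) -> float:
    """Yearly running cost for a continuous load at a flat tariff."""
    return annual_kwh(power_w) * price_per_kwh


if __name__ == "__main__":
    tariff = 0.15  # price per kWh, as quoted in the text
    for watts in (28, 45, 15):
        print(f"{watts} W continuous: {annual_kwh(watts):.0f} kWh/year, "
              f"cost {annual_cost(watts, tariff):.2f} per year")
```

Swap in your own tariff and measured wattage; even a 15 W standby load adds well over 100 kWh per year when it never switches off.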

Nearby Signal Interference

Satellite signals operate in crowded frequency bands where even a 1dB increase in noise can disrupt reception. Modern urban environments generate 50-70dBμV/m of RF noise across the 10.7-12.75GHz Ku-band, enough to degrade signal-to-noise ratios (SNR) by 15-25% in high-interference zones. Common culprits include 5G base stations (operating at 3.5GHz but emitting harmonics up to 10.5GHz), microwave ovens leaking 2-5mW/cm² at 2.45GHz, and poorly shielded Wi-Fi 6E routers blasting -20dBm sidelobes into adjacent satellite bands.

“Field measurements show 38% of residential satellite installations in metro areas suffer ≥3dB interference degradation during peak hours (7-10PM), when household RF emissions spike 60% above daytime levels.”

— International Telecommunication Union (ITU) Report 2024

The polarization mismatch problem is equally damaging. Satellite signals use circular polarization, but terrestrial interference often arrives with linear polarization, creating 3-8dB cancellation effects when mixed in the LNB. A 10W security camera transmitter just 50m away operating at 11.7GHz horizontal polarization can wipe out 22% of useful signal energy through this effect. Worse, frequency drift in cheap transmitters means a device nominally at 11.3GHz might actually bleed into 11.25-11.35GHz, overlapping critical satellite sub-bands.

Cable ingress amplifies these issues. A single 0.5mm pinhole in aging coax shields admits -35dBm interference—enough to create pixelation during 8PSK modulation requiring ≥14dB SNR. RG-6 cables older than 7 years typically develop 3-5dB poorer shielding effectiveness, turning them into 2m-long antennas for picking up local RF junk. Ground loops between dishes and modems create another path, injecting 50-100mV of 60Hz hum that modulates the 18V LNB supply, reducing tuner sensitivity by 1dB per 10mV of noise.